The Universe Is a Language

The fluorescent lights in the Princeton physics library hummed at sixty hertz — a frequency most people stopped hearing after a few minutes, but that Bekenstein could never quite tune out. It was 1972. The building smelled like chalk dust and old carpet and the particular staleness that accumulates in rooms where people think harder than they move. He sat at a table near the back, surrounded by yellow legal pads covered in equations he kept crossing out.

Jacob Bekenstein was twenty-five years old, Mexican-born, quiet in the way that people who are always running calculations in their head tend to be quiet. His advisor was John Wheeler, the same Wheeler who had coined the phrase "black hole," who had trained Feynman, who walked the halls of Fine Hall like a man who knew where the edge of knowledge was and enjoyed standing on it. Wheeler liked students who asked uncomfortable questions. Bekenstein had one that wouldn't let him sleep.

The question was about a book.

Not a specific book. Any book. He kept imagining throwing one into a black hole. It was the kind of thought experiment that sounds like a party trick until you realize it breaks physics.

Here is the problem, and it is not small: there is a law in physics that says information cannot be destroyed. This is not a suggestion. It is not a guideline. It is load-bearing architecture — the kind of principle that, if it fails, the entire structure of thermodynamics collapses behind it. If you burn a book, the information in its pages still exists, theoretically, in the pattern of smoke and ash and radiated heat. The data is scrambled beyond any practical recovery, but it is there. Conservation of information. Absolute. Non-negotiable.

But black holes eat everything and return nothing. That was the whole point of them. Matter falls past the event horizon and vanishes from the observable universe. So: throw the book in. The black hole swallows it. The information — every word, every idea, every arrangement of atoms that made the pages distinguishable from blank paper — is gone. Not scrambled. Not hidden. Gone.

Either the black hole was violating a law that everything else in physics obeyed, or there was something about black holes that everyone had missed.

Bekenstein sat with this for months. The other graduate students were working on problems that had solutions: calculable, publishable, the kind of work that becomes a thesis and then a tenure case and then a career. Bekenstein was chasing something that most of the department thought was either trivial or nonsense. Black holes, in 1972, were still half-theoretical. The mathematics said they existed. Nobody had seen one. And here was this quiet twenty-five-year-old insisting that a black hole had to have entropy, a measurable quantity of internal disorder, or the second law of thermodynamics was broken.

The pushback was immediate and personal. Stephen Hawking, already ascending toward his own kind of scientific sainthood, argued publicly that Bekenstein was wrong. The objection was elegant and cruel in the way physics objections tend to be: if a black hole has entropy, then it must also have a temperature. If it has a temperature, it must radiate. But black holes, by definition, emit nothing. Therefore, Bekenstein was confused.

What happened next is one of the great reversals in the history of science. Hawking sat down to disprove Bekenstein and instead proved him right. The mathematics showed that black holes do radiate — a faint quantum glow now called Hawking radiation — and that their entropy is real, measurable, and proportional to the surface area of the event horizon. Not the volume. The surface. The two-dimensional skin of the three-dimensional abyss.

The formula was beautiful. It was also, for anyone willing to follow it to its conclusion, terrifying.

What the Formula Actually Says

Strip away the Greek letters and the physical constants and listen to what the equation is telling you. It says the information content of a black hole is encoded not in its volume but on its surface. Three dimensions of stuff, fully described by two dimensions of data.
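
For readers who want the Greek letters back, the result usually quoted as the Bekenstein-Hawking entropy can be written as below. This is the standard textbook form, not something derived in this chapter. Here A is the area of the event horizon, k_B is Boltzmann's constant, and ℓ_P is the Planck length.

```latex
S_{\mathrm{BH}} = \frac{k_B c^3 A}{4 G \hbar} = \frac{k_B A}{4 \ell_P^2},
\qquad
\ell_P = \sqrt{\frac{G \hbar}{c^3}}
```

The entropy scales with the area A and not with the enclosed volume, which is the point the paragraph above is making.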

This is not a metaphor. It is not an analogy. It is a mathematical result with consequences that reach out and grab the throat of every assumption physics had been running on since Newton.

The first consequence is the Holographic Principle. If everything inside a black hole is described by its surface, then perhaps everything inside any bounded region of space is described by its boundary. Scale that up. The implication — pursued later by 't Hooft and Susskind — is that the entire three-dimensional universe might be a projection of information stored on a distant two-dimensional surface. Reality as hologram. Not as poetry. As physics.

The second consequence is the Resolution Limit. Bekenstein's formula doesn't stretch to infinity. When you push the math to its smallest scale, you hit a floor: the Planck length, roughly 1.6 × 10⁻³⁵ meters. Below this, the formula stops making sense. Space itself becomes grainy. Pixelated. There is a smallest possible unit of area, and therefore a smallest possible unit of information that any region of space can hold.

The universe, in other words, is not smooth. It is not continuous. It is not analog. At the bottom of everything, reality is digital. It has a pixel size. It has a resolution. And like any system with a finite resolution, it has a maximum file size — the Bekenstein Bound — an absolute cap on how much data any physical system can contain.
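
To put rough numbers on the "pixel size" and the "maximum file size," here is a minimal sketch that assumes the standard textbook expressions: the Planck length as sqrt(ħG/c³), the Bekenstein Bound as 2πRE/(ħc ln 2) bits, and the horizon-area count A/(4ℓ_P² ln 2) for a non-rotating black hole. The function names are illustrative, and the constants are rounded SI values.

```python
import math

# Rounded SI values of the physical constants (good enough for orders of magnitude)
HBAR = 1.054571817e-34   # reduced Planck constant, J*s
G = 6.67430e-11          # gravitational constant, m^3 kg^-1 s^-2
C = 2.99792458e8         # speed of light, m/s


def planck_length():
    """Planck length in meters: sqrt(hbar * G / c^3)."""
    return math.sqrt(HBAR * G / C**3)


def bekenstein_bound_bits(radius_m, mass_kg):
    """Upper limit, in bits, on the information held by mass-energy E = m c^2
    inside a sphere of the given radius: 2*pi*R*E / (hbar * c * ln 2)."""
    energy = mass_kg * C**2
    return 2 * math.pi * radius_m * energy / (HBAR * C * math.log(2))


def horizon_bits(mass_kg):
    """Bits associated with the horizon area of a non-rotating black hole:
    A / (4 * l_P^2 * ln 2), with Schwarzschild radius r_s = 2 G M / c^2."""
    r_s = 2 * G * mass_kg / C**2
    area = 4 * math.pi * r_s**2
    return area / (4 * planck_length()**2 * math.log(2))


if __name__ == "__main__":
    print(f"Planck length: {planck_length():.3e} m")
    print(f"1 kg inside a 1 m sphere: at most {bekenstein_bound_bits(1.0, 1.0):.2e} bits")
    print(f"Solar-mass black hole horizon: {horizon_bits(1.989e30):.2e} bits")
```

Under these formulas a black hole sits exactly at the cap: feeding its own Schwarzschild radius and mass into `bekenstein_bound_bits` gives the same count as `horizon_bits`.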

The Silence After the Discovery

Here is the part the textbooks leave out.

Bekenstein published. The formula was confirmed, by Hawking's own calculation. Hawking, to his considerable credit, publicly acknowledged that Bekenstein had been right and he had been wrong. The physics was settled. The entropy of a black hole is real, it is proportional to the surface area, and the universe is quantized at the Planck scale.

And then — nothing.

Not nothing in the sense of inactivity. Papers were written. Conferences were held. The Holographic Principle became a cottage industry. String theorists used it. Loop quantum gravity people used it. Everyone took what they needed and went back to their corners.

But the question — the uncomfortable one, the one that Bekenstein's formula screamed if you listened — went largely unasked.

If the universe is digital, what is it running on?

If reality has a maximum file size, who set the limit?

If information is fundamental — more fundamental than matter, more fundamental than energy, the actual substrate of existence — then what wrote it?

Physics, as a discipline, has a well-practiced response to questions like these. The response is: shut up and calculate. This phrase captures a real and valuable methodological commitment. Don't ask what the wave function means. Don't ask why the constants have the values they do. Calculate what happens, predict the outcome, test the prediction. If the math works, the math works. Meaning is someone else's department.

For decades, this approach delivered. Quantum electrodynamics predicted the magnetic moment of the electron to twelve decimal places. The Standard Model cataloged every known particle. The math worked. It worked so well that asking why began to feel not just unscientific but almost rude — like interrupting a surgeon to ask about their childhood.

But Bekenstein's formula sits in an awkward place. It works. The predictions hold. And it says, in the language of mathematics, that reality is made of information — organized, bounded, structured information with a definite resolution and a maximum capacity. The formula doesn't tell you what the information means. It doesn't tell you who organized it. It hands you a result that raises more questions than it answers, and then physics collectively agrees to talk about something else.

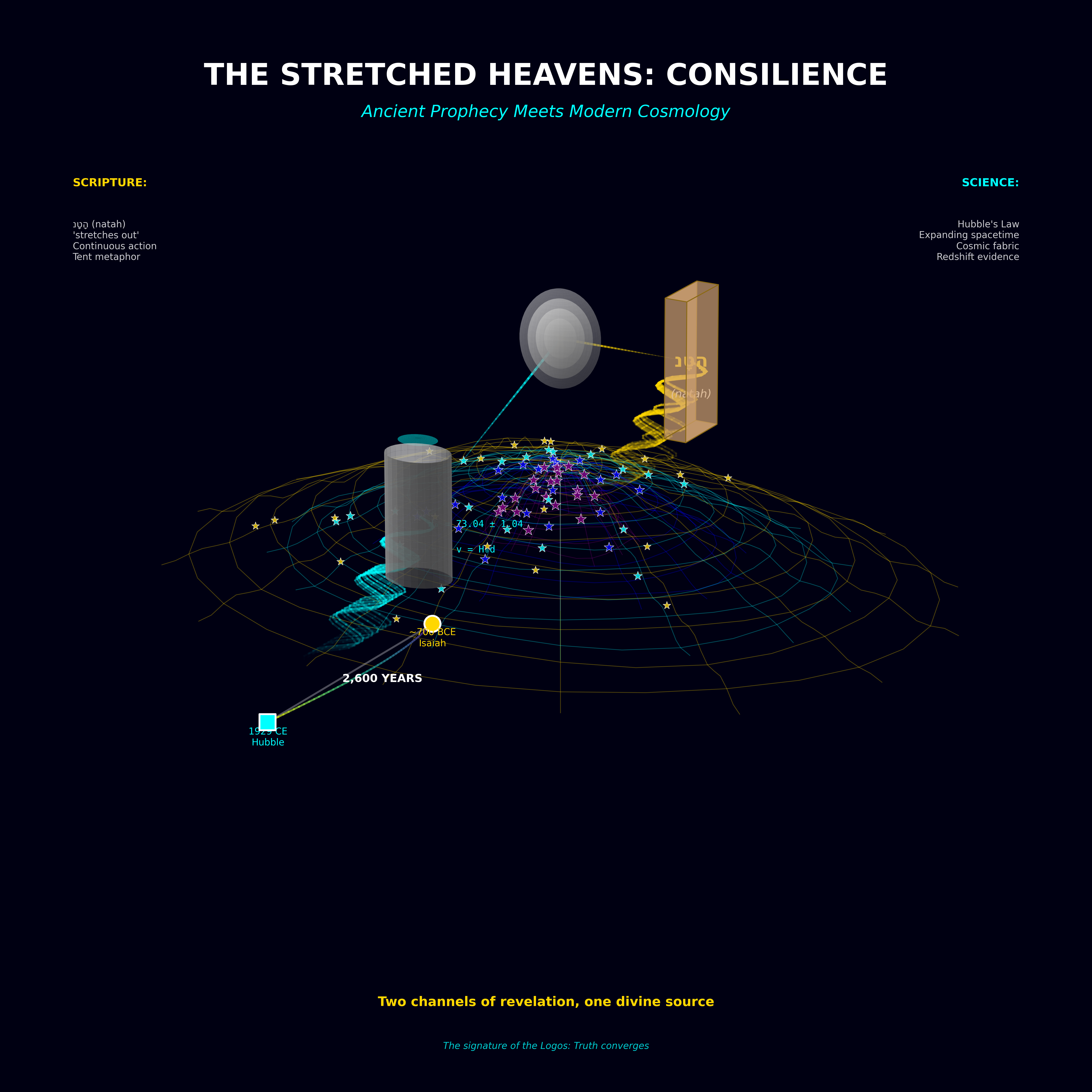

The Universe as Language

There is a way of reading Bekenstein's discovery that the "shut up and calculate" tradition specifically avoids.

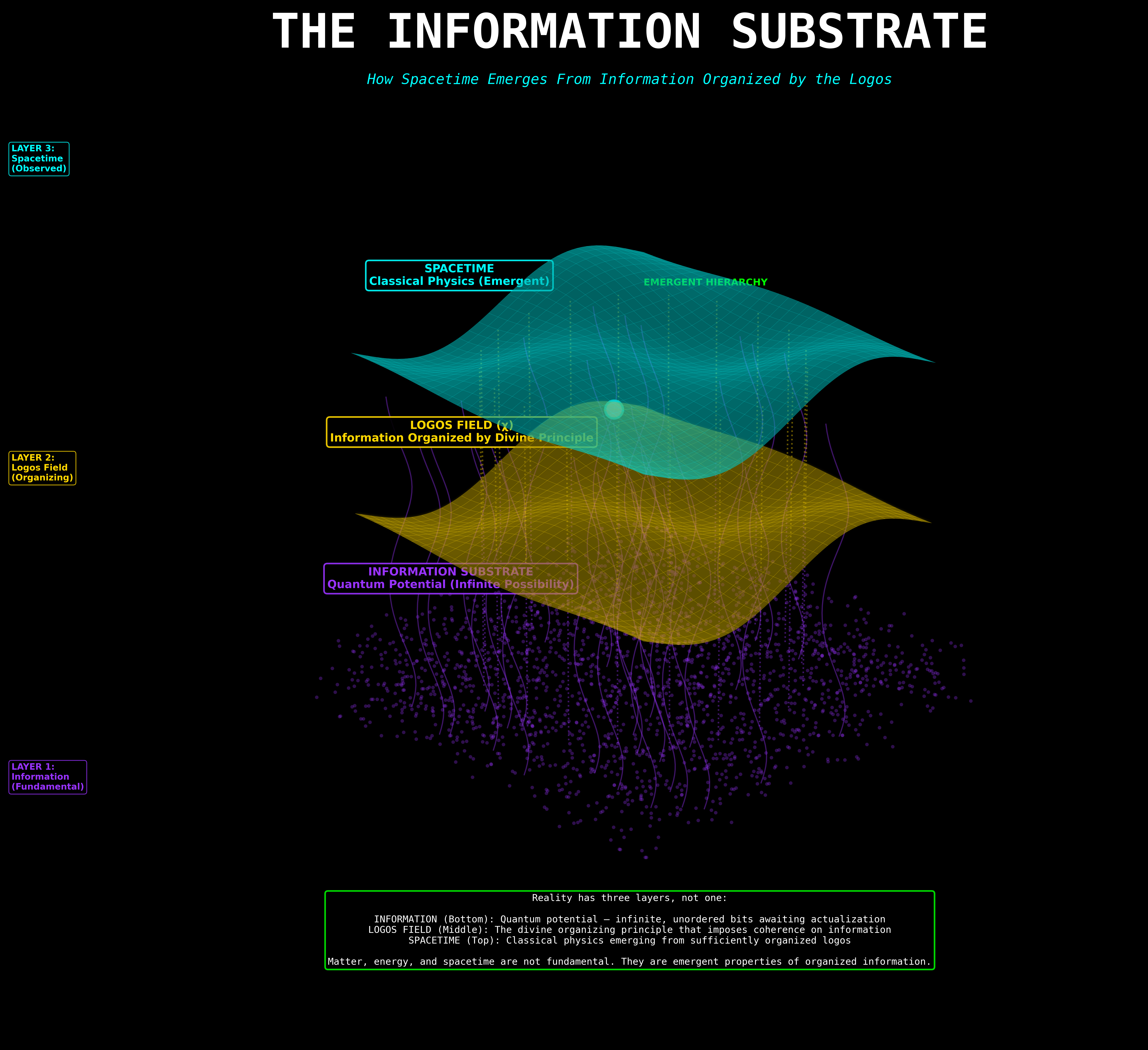

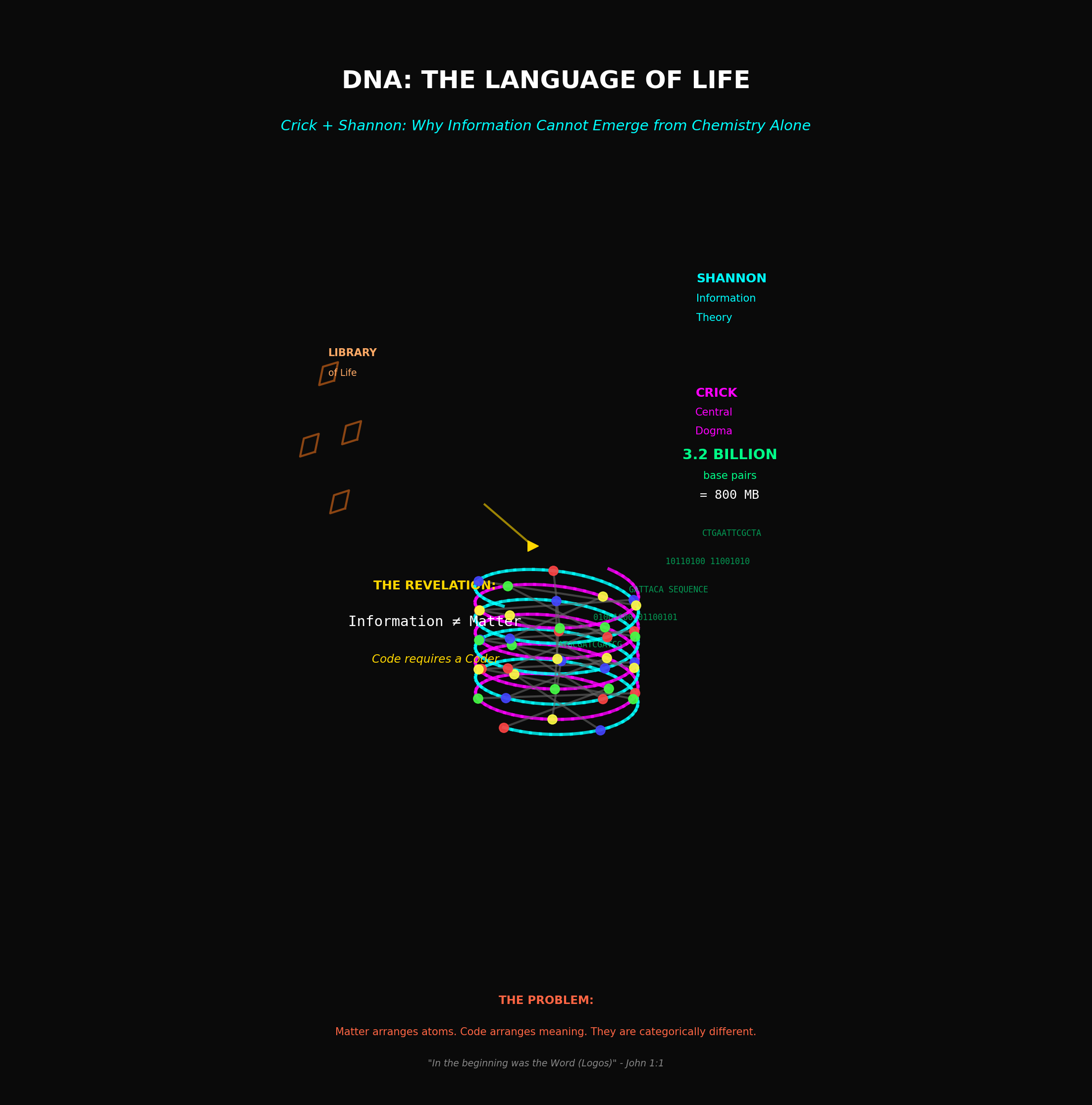

If reality is information, and information is organized, then the universe is not fundamentally stuff — not matter, not energy, not fields vibrating in a void. The universe is fundamentally language. It is structured communication. It has syntax (the laws of physics). It has grammar (the mathematical relationships between those laws). It has a vocabulary (the elementary particles and forces). And it has a resolution — a Planck-scale pixel grid that sets the precision of every sentence the universe can write.

Science spent three centuries assuming the question was: what are the laws that govern matter?

Bekenstein showed that the question is: what is the language that defines reality?

This is not a mystical claim. It is the direct, unadorned reading of the mathematics. The Bekenstein Bound is a data cap. The Planck length is a pixel size. The holographic principle says the information is the thing, and the physical world is the projection.

And yet — and this is where the discomfort lives, where it has lived since 1972 — if the universe is a language, then the deepest question in physics is not about forces or particles or fields. It is about the speaker. Languages do not write themselves. Codes do not emerge from noise. A Bekenstein Bound implies a boundary condition, and a boundary condition implies something that set it.

Bekenstein himself was not loud about this implication. He was a physicist, not a theologian, and he understood the professional consequences of crossing that line. But the formula doesn't care about professional consequences. It sits there, having survived every theoretical check anyone has thrown at it, and it says: the foundation of reality is structured information with a finite resolution and a maximum capacity.

That's not a universe made of things. That's a universe made of words.

And if the universe is a language, then somewhere — before the first word was spoken, before the first bit collapsed into a definite state — there was a Logos.

Not a metaphor. A mathematical necessity.

The Universal Computer

Edward Fredkin was not supposed to be in physics.

He had dropped out of Caltech after a year, joined the Air Force, learned to fly jets and write code for the early warning radar systems that were supposed to tell America if the Soviets launched. He was good at two things that didn't normally go together: machines and abstraction. He could build the hardware and he could see through it to the logic underneath. By the time he ended up running the MIT Information Mechanics Group in the 1980s — a position he essentially invented for himself — he had already made and lost fortunes in computing, held a professorship at MIT without a PhD, and developed the habit of saying things at conferences that made half the room furious and the other half unable to sleep for a week.

The thing Fredkin said that changed everything was simple enough to write on a napkin: the universe is a computer.

Not like a computer. Not analogous to a computer. A computer. A cellular automaton — a grid of discrete cells, each one updating its state based on the states of its neighbors, step by step, tick by tick, following a set of rules that nobody programmed into it because nobody knows where the rules came from.

He said this in rooms full of people who had spent their careers describing the universe with smooth, continuous differential equations — the calculus of Newton and Leibniz, the field equations of Maxwell and Einstein, the wave mechanics of Schrödinger. These were the tools that had delivered nuclear energy and semiconductors and GPS satellites. And here was this dropout with a pilot's license telling them that all of it was just a very good approximation of something fundamentally different.

The Logic of the Machine

The argument was not mystical. Fredkin was not a mystic. He was an engineer who had noticed something that the physicists kept overlooking because they were trained not to look for it.

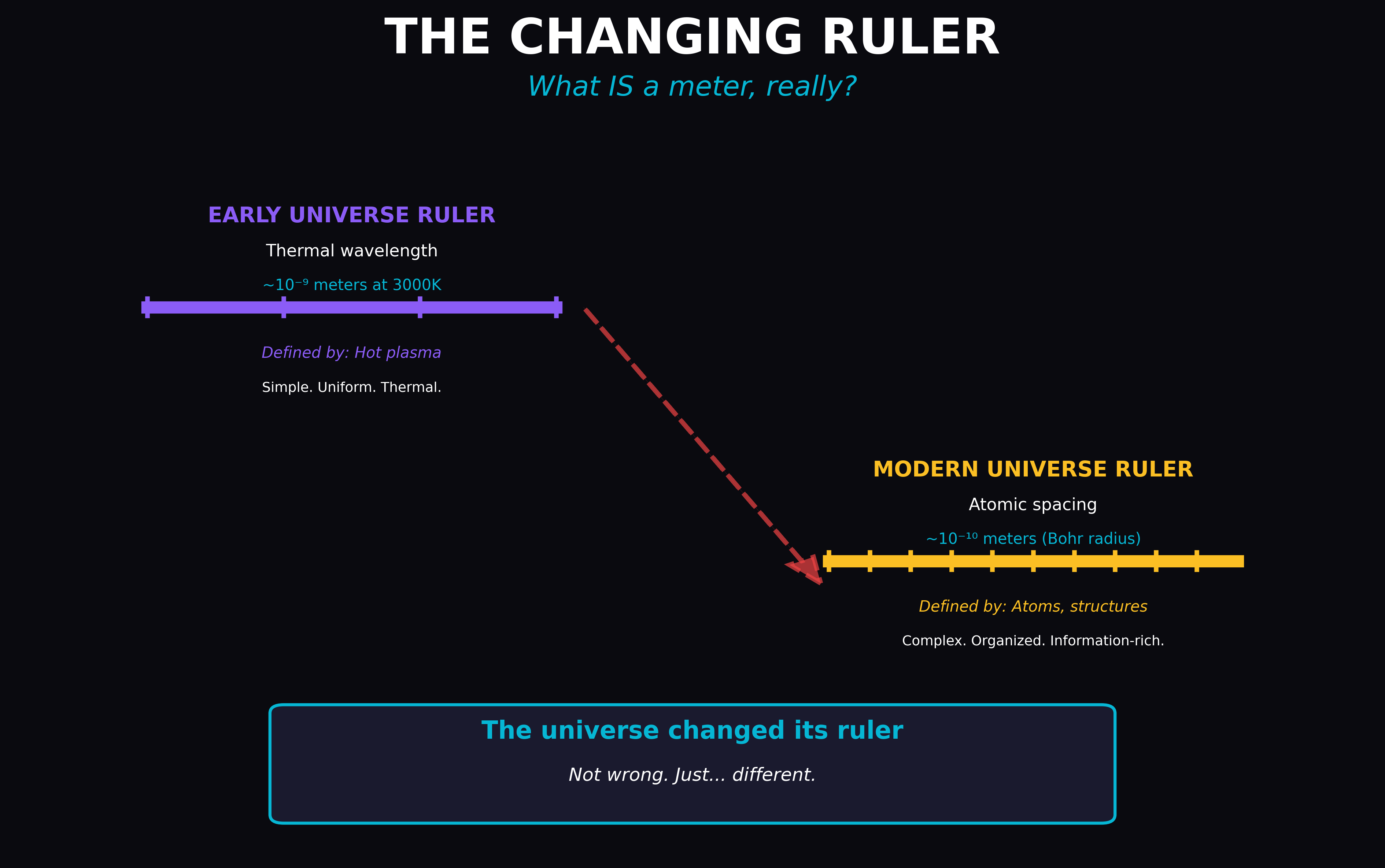

Bekenstein had already shown — and by the early 1980s, this was settled physics — that space is digital at the Planck scale. There is a smallest unit of area. A pixel. A resolution limit. The universe is not smooth. It has grain.

Fredkin's move was the obvious next step, obvious in the way that things are obvious only after someone says them out loud: if reality is digital, then reality is computational. Digital systems process information. They run. They execute. The pixelation Bekenstein discovered isn't just a property of space — it's a signature. It tells you what kind of system you're looking at.

A digital system that evolves in discrete time steps according to local rules is called a cellular automaton. The most famous example is Conway's Game of Life — a grid of black and white cells that follows four simple rules about how many neighbors a cell has. From those four rules, staggeringly complex structures emerge: patterns that move, replicate, compute. The Game of Life can simulate a Turing machine. It can, in principle, run any algorithm that any computer can run. Four rules. Infinite complexity.
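
The rules are short enough to run. What follows is a minimal sketch of one update step of Conway's Game of Life, with the usual four rules condensed into two conditions: a dead cell with exactly three live neighbors is born, and a live cell with two or three live neighbors survives. The grid is treated as unbounded, and the names `step`, `glider`, and `blinker` are illustrative.

```python
from collections import Counter


def step(live):
    """Advance a set of live (row, col) cells by one generation."""
    # Count, for every cell adjacent to a live cell, how many live neighbors it has.
    neighbor_counts = Counter(
        (r + dr, c + dc)
        for (r, c) in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    # Birth on exactly 3 neighbors; survival on 2 or 3.
    return {
        cell
        for cell, n in neighbor_counts.items()
        if n == 3 or (n == 2 and cell in live)
    }


# A glider: five cells that reassemble themselves one square down and
# one square to the right every four generations.
glider = {(0, 1), (1, 2), (2, 0), (2, 1), (2, 2)}

# A blinker: three cells in a row that flip between horizontal and vertical.
blinker = {(1, 0), (1, 1), (1, 2)}

if __name__ == "__main__":
    state = glider
    for generation in range(5):
        print(generation, sorted(state))
        state = step(state)
```

Nothing in `step` mentions gliders or motion. The moving pattern comes entirely from the local rule applied over and over.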

Fredkin argued that the universe works the same way, just with better resolution and a more interesting ruleset.

The Fredkin Gate

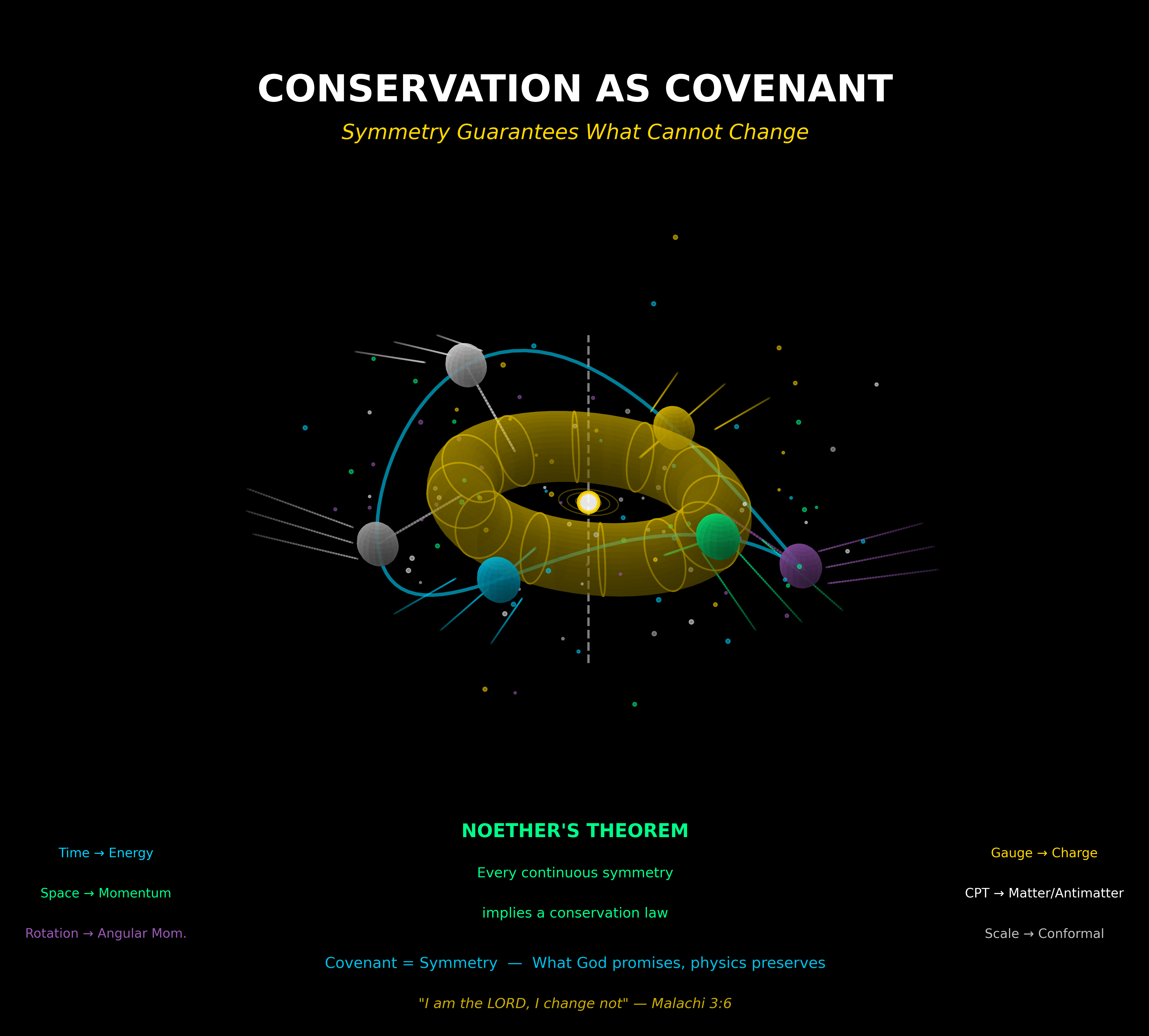

But Fredkin didn't just argue. He built something.

The problem with ordinary computation, the kind that happens inside every laptop and server and phone, is that it's irreversible. When an AND gate takes two input bits and produces one output bit, information is lost. You can't run the gate backward and recover the inputs from the output. This loss is not free. Landauer's Principle, formulated in 1961, states that erasing one bit of information dissipates at least kT ln 2 of energy as heat, where k is Boltzmann's constant and T is the temperature of the surroundings, and so produces a minimum increase in entropy. Every irreversible operation generates heat. Every calculation costs something.
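
Both halves of that argument fit in a few lines. This is a minimal sketch, assuming the usual statement of Landauer's bound as k_B·T·ln 2 joules per erased bit. The function names are illustrative.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact in the 2019 SI)


def landauer_limit_joules(temperature_k):
    """Minimum heat dissipated when one bit is erased at the given temperature."""
    return K_B * temperature_k * math.log(2)


def and_gate(a, b):
    """Ordinary AND: two bits in, one bit out."""
    return a & b


# Group the four possible input pairs by the output they produce.
# Three different inputs collapse onto the output 0, so the gate
# cannot be run backward.
preimages = {}
for a in (0, 1):
    for b in (0, 1):
        preimages.setdefault(and_gate(a, b), []).append((a, b))

if __name__ == "__main__":
    print("inputs that give 0:", preimages[0])
    print("inputs that give 1:", preimages[1])
    print(f"Landauer limit at 300 K: {landauer_limit_joules(300.0):.3e} J per bit")
```

The per-bit cost is tiny at room temperature, a few zeptojoules. The argument in the text concerns the sum over every interaction everywhere.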

If the universe were an irreversible computer, it would be drowning in waste heat. Every physical interaction — every particle collision, every photon absorbed and re-emitted — would be an irreversible computation, and the entropy cost would be catastrophic. The universe would burn itself up calculating its own next state.

But it doesn't. Physical processes at the fundamental level are reversible. Run the equations of motion backward and they still work. Time-reverse a particle interaction and it obeys the same laws. The universe, whatever it is doing, is not paying the Landauer tax.

Fredkin solved this by demonstrating that reversible computation is possible. The Fredkin Gate — a three-input, three-output logic gate — performs computation without destroying information. No bit is ever erased. The operation can be run backward perfectly. The inputs can always be recovered from the outputs.
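
The gate itself is a controlled swap: the first bit passes through unchanged and, when it is 1, the other two trade places. The sketch below is a minimal illustration with hypothetical helper names. It checks that the gate is one-to-one over all eight inputs, that applying it twice restores the input, and that it can compute AND while keeping the leftover bits instead of erasing them.

```python
from itertools import product


def fredkin(c, a, b):
    """Controlled swap: if the control bit c is 1, swap a and b; otherwise pass through."""
    return (c, b, a) if c else (c, a, b)


ALL_INPUTS = list(product((0, 1), repeat=3))


def is_reversible():
    """True if no two inputs share an output, so the gate can be run backward."""
    outputs = [fredkin(*bits) for bits in ALL_INPUTS]
    return len(set(outputs)) == len(ALL_INPUTS)


def is_self_inverse():
    """True if applying the gate twice returns every input unchanged."""
    return all(fredkin(*fredkin(*bits)) == bits for bits in ALL_INPUTS)


def reversible_and(x, y):
    """AND built from one Fredkin gate: x is the control, (y, 0) are the data bits.
    The third output bit equals x AND y; the first two are retained, not erased."""
    return fredkin(x, y, 0)


if __name__ == "__main__":
    for bits in ALL_INPUTS:
        print(bits, "->", fredkin(*bits))
    print("reversible:", is_reversible(), "self-inverse:", is_self_inverse())
```

The price of reversibility shows up in `reversible_and`: the answer arrives together with two extra output bits that have to be carried along, since throwing them away would be exactly the erasure Landauer's Principle charges for.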

This was not a theoretical curiosity. It meant that a computer built entirely from Fredkin Gates could, in principle, compute forever without generating entropy. A perfect, frictionless calculating machine. And if the universe is such a machine — if physical reversibility is not a coincidence but a design signature — then Fredkin had identified the logic architecture of reality.

The Part Nobody Wanted to Hear

Fredkin presented this work at physics conferences throughout the 1980s and 1990s. The response followed a pattern that Bekenstein would have recognized.

The mathematics was not attacked. The Fredkin Gate works. Reversible computation is real. The cellular automaton model is formally consistent with known physics at the scales where it has been tested. Nobody stood up and said the math is wrong because the math isn't wrong.

What people said instead was: so what?

This is the "shut up and calculate" defense deployed in its purest form. The argument goes: even if the universe is a cellular automaton, we already have perfectly good equations that describe its behavior. The continuous approximation works. Why do we need to know what's underneath? What does it buy us?

What it buys you is the same thing Bekenstein's formula bought you — a conclusion you can't unthink once you've thought it.

If the universe is a computer, it is running a program. A cellular automaton does not write its own rules. The rules precede the execution. They define which states are possible, which transitions are allowed, which patterns can emerge and which cannot. Conway didn't discover the Game of Life by watching a blank grid and waiting for something to happen. He chose the rules. Then he pressed start.

The rules of physics — the specific values of the gravitational constant, the speed of light, the charge of the electron, the whole carefully tuned orchestra of constants that permits atoms and chemistry and stars and consciousness — are the program. They were in place before the first tick of the cosmic automaton. They are not outputs of the computation. They are inputs.

And inputs require an input source.

Fredkin, unlike Bekenstein, was not shy about this implication. He said, publicly and repeatedly, that the existence of the computational substrate implied the existence of an intelligence that wrote the code. He called this hypothetical entity "the programmer" — a word that made theologians suspicious and physicists angry and everyone else confused about whether he was serious.

He was serious. The mathematics didn't give him a choice.

The Unfinished Sentence

But here is what Fredkin could not do, and what his work — for all its elegance — leaves dangling like an incomplete equation.

He could tell you the universe is a computer. He could show you the gate. He could point to the reversibility and the digital substrate and the cellular automaton architecture. What he could not tell you is what the program means.

A cellular automaton computes. It does not intend. It processes. It does not understand. The Game of Life generates gliders and blinkers and still lifes and infinitely complex patterns — but it does not know it is doing any of this. There is no awareness inside the grid. The rules execute. The cells update. The clock ticks.

If the universe is a cellular automaton, then where does consciousness come from? Where does meaning come from? The reversible gate preserves information perfectly — but information about what, and for whom?

Fredkin identified the machine. He proved it was real, or at least that its architecture was consistent with everything we know about physics. And then, having built the machine, he found himself standing in front of it with the same question Bekenstein had faced: the math works, the predictions hold, and the result raises more questions than it answers.

The universe is a computer. The program is elegant. The computation is reversible. And the next line of the proof — the line that says what the program computes, and why, and for whom — remains unwritten.

Bekenstein found the hardware.

Fredkin found the software.

Neither found the programmer.

That required a different kind of physicist — one who was willing to put the observer back inside the equation and ask what happens when the computer becomes aware of itself.

It From Bit

By the time John Archibald Wheeler turned seventy, he had done enough to earn the right to say something crazy. He had worked out the theory of nuclear fission with Niels Bohr and then joined the Manhattan Project. He had mentored Richard Feynman: not just supervised, but shaped the mind that reshaped quantum electrodynamics. He had co-written the definitive textbook on general relativity. He had coined the term "black hole" in a lecture in 1967 and watched the phrase take over the language of physics like a virus. He had earned every credential the discipline could offer, and he had earned them honestly, through decades of calculation and insight and the unglamorous work of being right about things that were hard to be right about.

So when Wheeler stood up at a conference in 1989 and said three words that sounded more like philosophy than physics, the room did not laugh. The room listened. Some of the people in the room wished they could unhear it.

The three words were: It from Bit.

"It" meant every physical thing. Every particle, every force, every field, every galaxy. Every object that physics had ever measured, modeled, or predicted. "Bit" meant a binary choice — yes or no, on or off, one or zero. The smallest possible unit of information. A single answer to a single question.

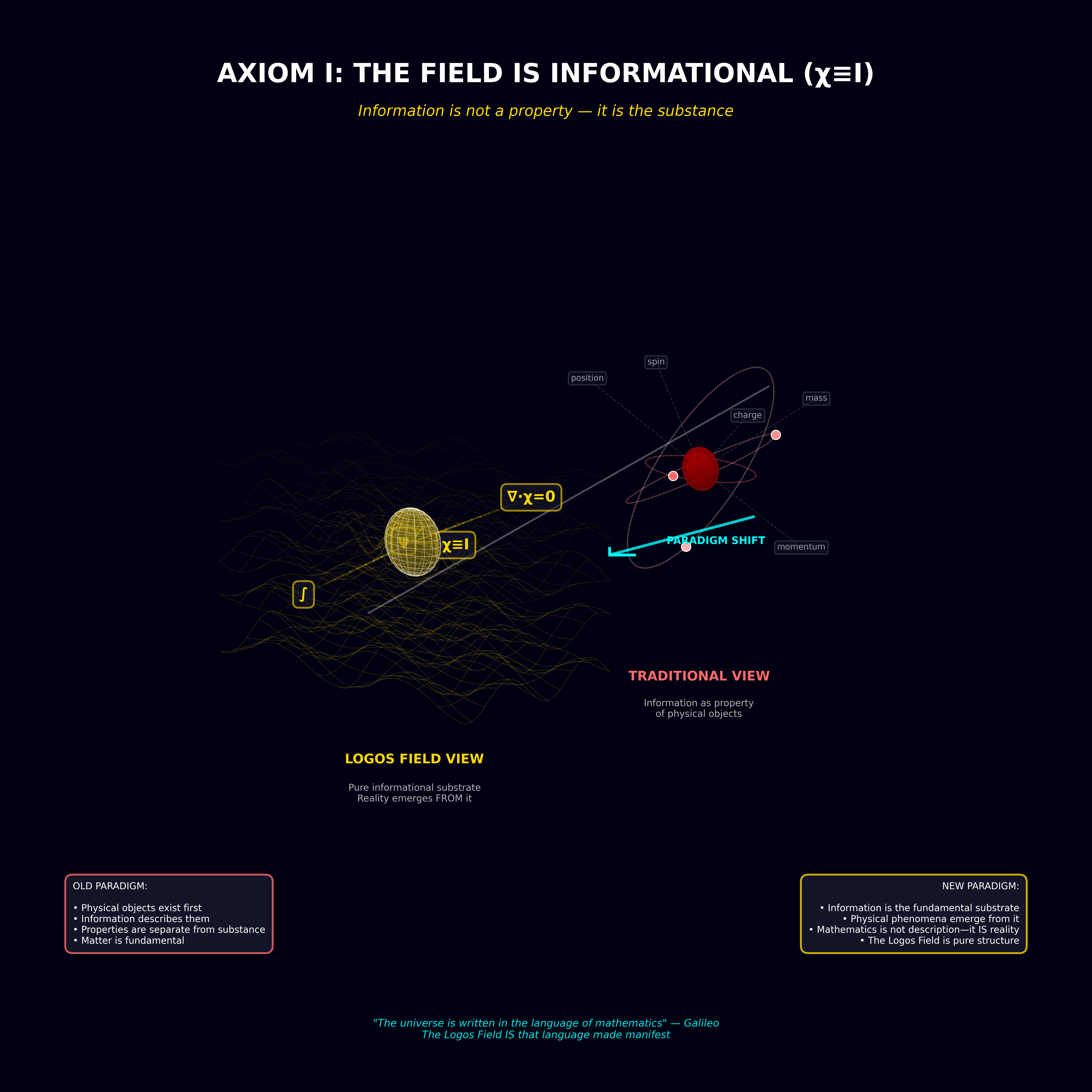

Wheeler was claiming that the physical world — all of it, the entire "It" — arises from information. Not carries information. Not encodes information. Is information. The bits come first. The stuff comes second.

The Problem Wheeler Was Solving

Wheeler was not being poetic. He was trying to resolve a problem that had been eating quantum mechanics alive for sixty years.

The problem is measurement.

In quantum mechanics, a particle does not have definite properties until it is observed. Before measurement, an electron exists in superposition — a mathematical cloud of possibilities described by a wave function. It is not at any particular location. It does not have a definite spin. It is a probability distribution, smeared across every state it could possibly occupy.

Then someone measures it.

The wave function collapses. Instantly. The cloud of possibilities vanishes and the electron snaps into a single, definite state. Location: here. Spin: up. The measurement didn't reveal a pre-existing fact. It created the fact. Before the question was asked, there was no answer.

This is not an interpretation. It is the mathematics. The Schrödinger equation that describes quantum evolution is smooth, continuous, deterministic. The collapse that occurs during measurement is sudden, discontinuous, and irreversible. These two behaviors do not fit together. They are described by different mathematics. The smooth evolution says one thing. The collapse says another. And for sixty years — from the Bohr-Einstein debates through the formulation of decoherence theory and the many-worlds interpretation — nobody had explained how or why the transition happens.
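
The mismatch between the two behaviors can be seen in a toy model. The sketch below assumes the simplest textbook picture: a single two-state system with real amplitudes, one reversible step (the Hadamard transform), and a measurement that follows the Born rule and then replaces the state with the outcome. It illustrates the two kinds of mathematics and makes no claim about what happens physically. The function names are illustrative.

```python
import math
import random


def hadamard(state):
    """Smooth, reversible step: maps amplitudes (a, b) to ((a+b)/sqrt 2, (a-b)/sqrt 2)."""
    a, b = state
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))


def measure(state, rng):
    """Born-rule measurement: outcome 0 with probability |a|^2, otherwise 1.
    Returns the outcome and the state after collapse."""
    a, b = state
    if rng.random() < abs(a) ** 2:
        return 0, (1.0, 0.0)
    return 1, (0.0, 1.0)


rng = random.Random(0)               # fixed seed so the run is repeatable
superposed = hadamard((1.0, 0.0))    # equal superposition of 0 and 1
outcomes = [measure(superposed, rng)[0] for _ in range(10_000)]

if __name__ == "__main__":
    print("after one Hadamard:", superposed)
    print("after two Hadamards:", hadamard(superposed))
    print("fraction of 1s measured:", sum(outcomes) / len(outcomes))
```

Applying `hadamard` twice returns the starting state, so nothing is lost. `measure` offers no way back: once it returns, the amplitudes that went in cannot be recovered from what came out.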

Bohr said: don't ask. The measurement is the measurement. We describe it, we don't explain it.

Everett said: it doesn't happen. The wave function never collapses. The universe splits.

Wheeler said: you're all looking at it backward. The collapse isn't a problem to be solved. It's the mechanism. It's how reality gets built.

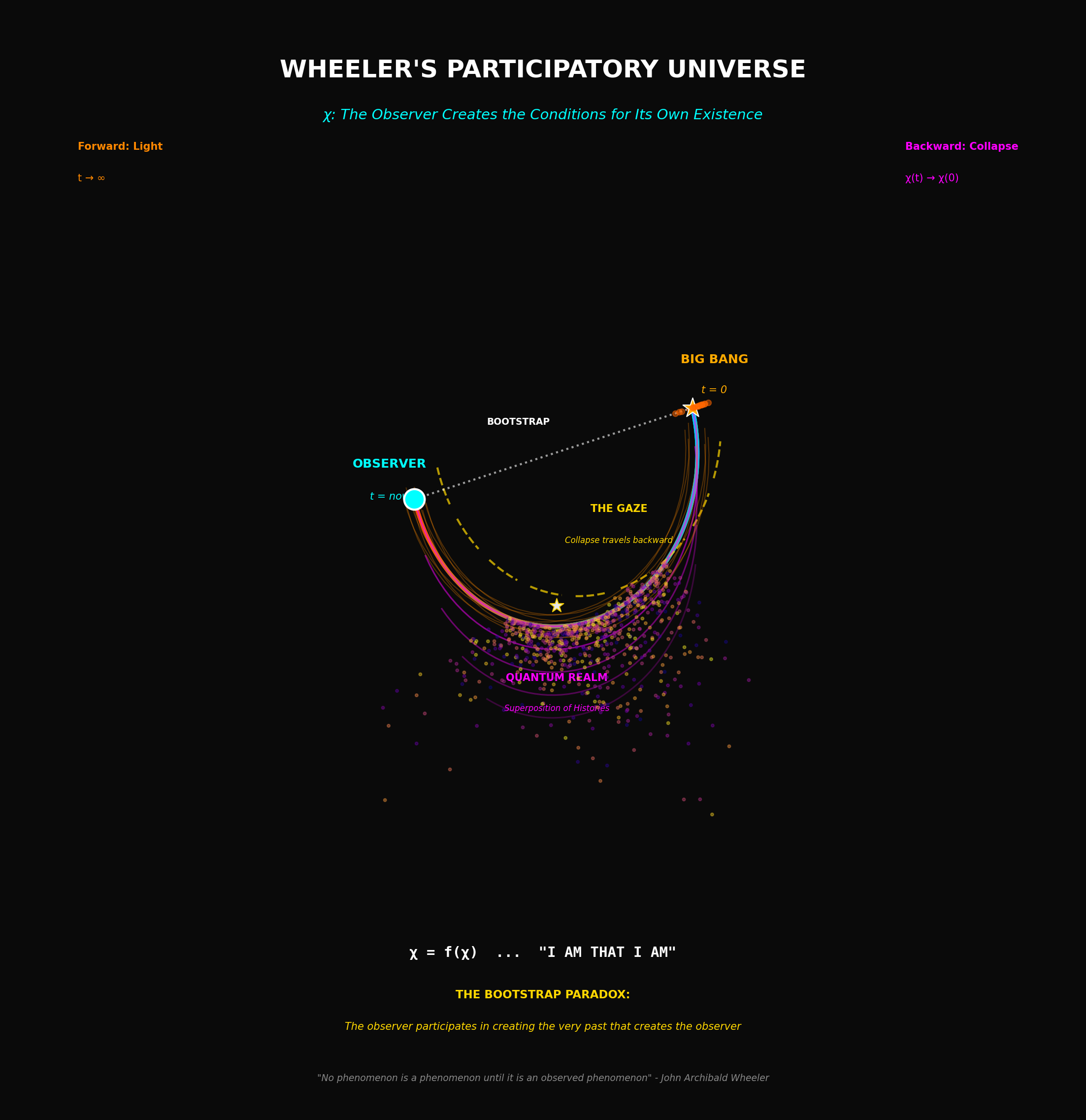

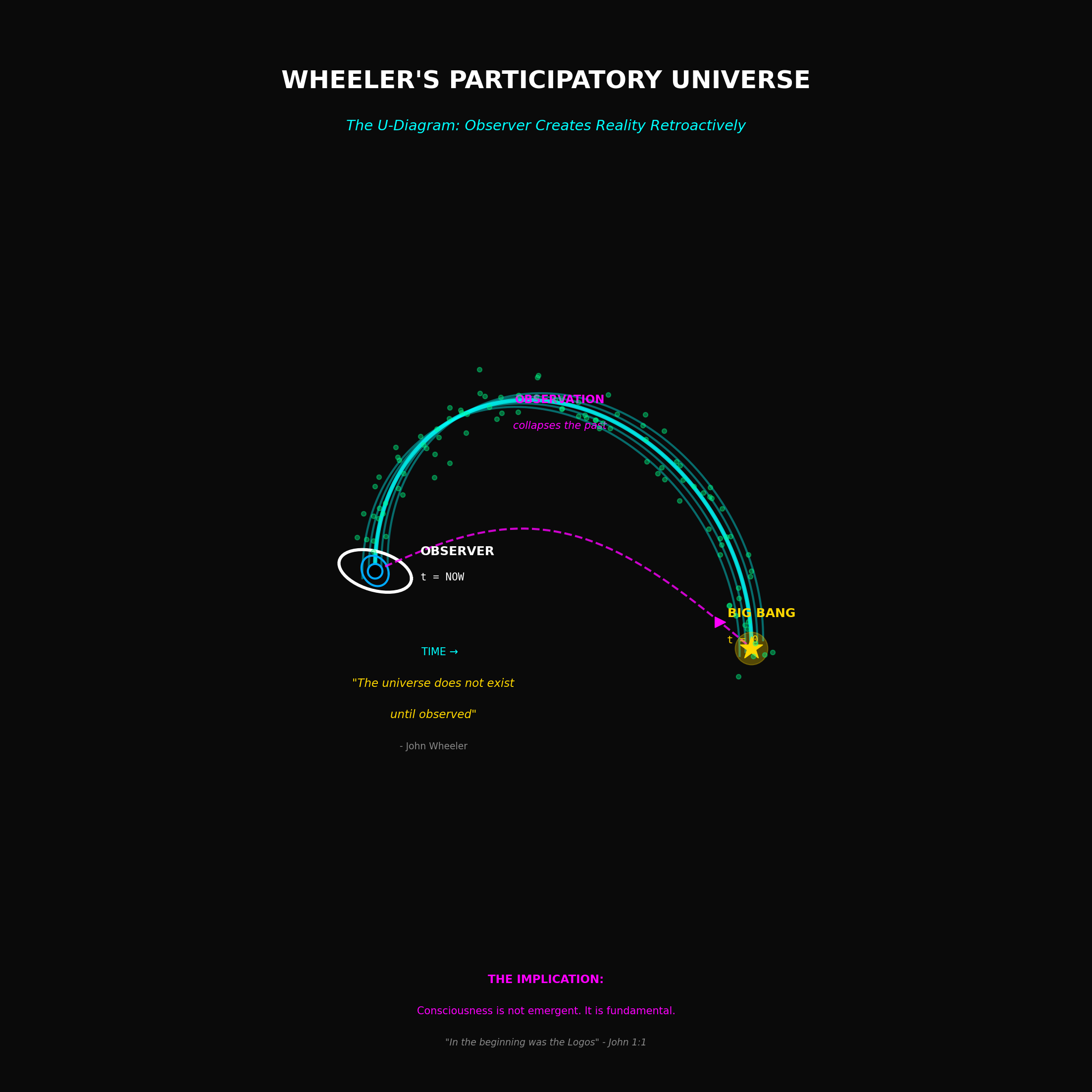

The Participatory Universe

Wheeler's argument went like this.

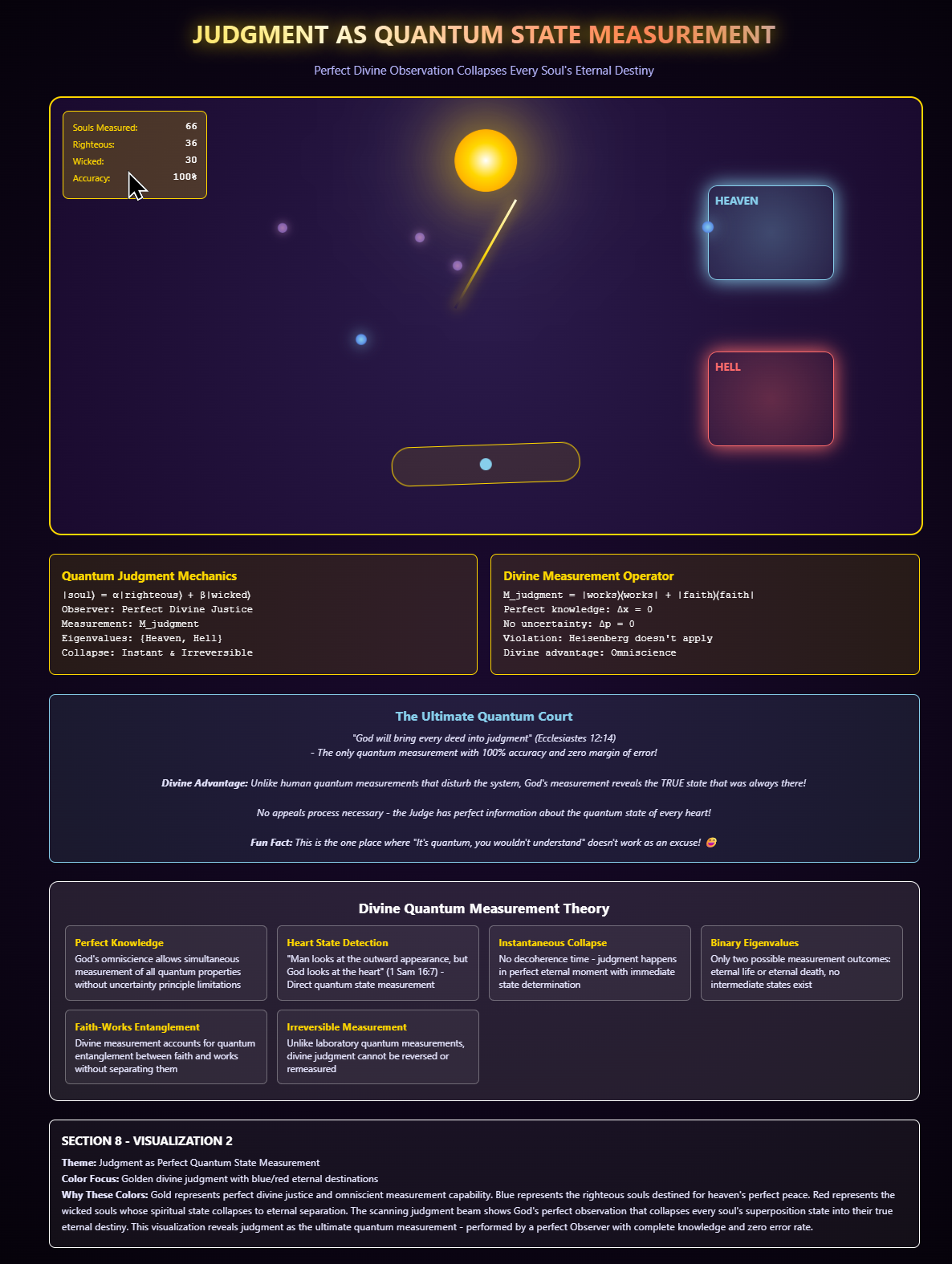

Every measurement is a binary choice. You ask the electron a yes-or-no question — "Are you here or not?" — and the universe answers. One bit of information is created. That bit is now part of the physical world. It is real in the most concrete sense the word can carry.

Scale that up. Every physical interaction is, at bottom, a measurement — one system extracting information from another. Atoms interact with photons. Particles scatter off particles. Fields couple to fields. At every point, at every moment, binary questions are being asked and answered, and each answer adds one more bit to the structure of reality.

This is "It from Bit." The physical world is not a pre-existing stage on which information plays out. The physical world is the accumulated record of every measurement that has ever occurred. The bits build the It.

But Wheeler pushed further — into territory that most of his colleagues would have preferred to leave unexplored.

If reality is created by measurement, and measurement requires an observer — something that asks the question and registers the answer — then the universe requires observers. Not as accidental byproducts. Not as irrelevant passengers. As participants. As the mechanism through which the bits are generated and the It comes into being.

Wheeler called this the Participatory Anthropic Principle. The name sounds like philosophy. The content is physics. Without observers, there are no measurements. Without measurements, there are no bits. Without bits, there is no It. The universe that produces consciousness is the same universe that requires consciousness in order to exist.

This is a loop. Wheeler knew it was a loop. He drew it — a U-shaped diagram with an eye at one end, looking back at the origin of the universe at the other end, the observation in the present participating in the creation of the past. He called it the "self-excited circuit." The universe bringing itself into being through the act of observing itself.

What Wheeler Could Not Close

And here — again — the anticlimactic pattern.

Wheeler published. The phrase "It from Bit" entered the vocabulary. Physicists who work on quantum information cite it constantly. It appears in textbook introductions and conference keynotes and the kind of popular science writing that makes the general public feel like physics is almost saying something about God but stops just short.

But the argument was never completed. Wheeler identified the mechanism — measurement creates reality — and he identified the requirement — observers are necessary — and then he stopped. Or rather, the discipline stopped. The question that "It from Bit" screams at the top of its lungs — who asked the first question? — was acknowledged as interesting and then filed under "philosophical implications" and left there.

Think about what the logic actually demands. If reality is built from bits, and bits are generated by measurements, and measurements require observers, then before the first observer evolved, before the first measurement occurred, before the first bit was generated — what was there? A wave function with no one to collapse it. A superposition with no one to ask it a question. An ocean of possibility with no shore.

Wheeler's self-excited circuit says the observer loops back and participates in the creation of the past. This is not impossible in quantum mechanics. The delayed-choice experiment, which Wheeler himself proposed, demonstrates that the choice of what to measure now can retroactively determine what happened then. The experiment has been performed. It works. The retrocausality is real.

But retrocausality only pushes the question back one level. The loop still needs a first term. The circuit still needs to close. If the observer creates the bit and the bit creates the It and the It creates the observer — then somewhere, at some point, something broke the symmetry. Something asked the first question. Something collapsed the first wave function. Something spoke the first bit into existence and started the chain.

Wheeler never named it. He was too careful a physicist and too aware of what naming it would cost him professionally. But the mathematics he left behind has a hole in it exactly the shape of a first cause — a Logos that spoke and, in speaking, created the information from which everything else was built.

The Three Pillars

Three physicists. Three discoveries. One conclusion that none of them were willing to state plainly and all of them pointed toward.

Bekenstein found the hardware: the universe is digital, with a finite resolution and a maximum information density. The substrate is quantized. Reality has a pixel size.

Fredkin found the software: the universe is computational, running on reversible logic that preserves information perfectly. The architecture is a cellular automaton. The program executes.

Wheeler found the operator: the universe is participatory, requiring conscious observers to collapse possibility into actuality. The bits don't generate themselves. Someone has to ask the question.

Hardware that is digital. Software that is reversible. An operating principle that requires a mind.

Each of these discoveries was confirmed. Each was celebrated. Each raised a question that the next discovery made more urgent. And after all three — after the pixel was found and the gate was built and the observer was identified as essential — physics arrived at a threshold it has been standing at ever since, looking across at a conclusion it can see clearly but has no professional vocabulary to articulate.

If the universe is a language, there must be a speaker.

If the universe is a program, there must be a programmer.

If the universe requires an observer, there must be a first observer.

The Logos is not a metaphor. It is the mathematical name for the entity that Bekenstein's bound implies, Fredkin's gate requires, and Wheeler's circuit cannot close without.

The next question — the one that separates physics from theology, or reveals that they were never separate — is what that Logos looks like when it enters the system it created.

The Great Schism

The 20th century ended with physics broken in two. On one side stood Albert Einstein and his towering masterpiece, General Relativity, which perfectly describes the universe of stars, planets, and galaxies — the macro-cosmos. On the other side stood Quantum Mechanics, a set of rules that perfectly describes the world of subatomic particles — the micro-cosmos.

The problem is that these two pillars of knowledge are absolutely, fundamentally incompatible. When you use the mathematics of General Relativity to calculate what happens inside a quantum system (like a black hole singularity or the instant of the Big Bang), the equations break down. They give meaningless results.

It is as if the universe is governed by two different languages, and they refuse to speak to each other. This is the Great Schism — the failure of four generations of brilliant minds to find the single Theory of Everything that can unify gravity and the quantum world.

The Incompatibility: Smooth vs. Jumpy

The core of the conflict lies in the nature of space and energy they describe. General Relativity treats space-time as smooth and continuous — a fabric that bends under mass, like a rubber sheet, infinitely divisible. Quantum Mechanics treats it as jumpy and quantized — energy, momentum, and position existing in discrete packets called quanta.

General Relativity is deterministic: if you know the initial conditions, you can perfectly predict the future. Quantum Mechanics is probabilistic: you can only predict the likelihood of an outcome. The future is uncertain.

To describe the whole universe, we need a theory that can handle both the smooth bend of gravity and the sudden, probabilistic jump of a quantum particle. When physicists try to unify them — for example, by quantizing gravity — the mathematical approximations required generate infinitely large, nonsensical answers. The system rejects the attempt to unify them under current rules.

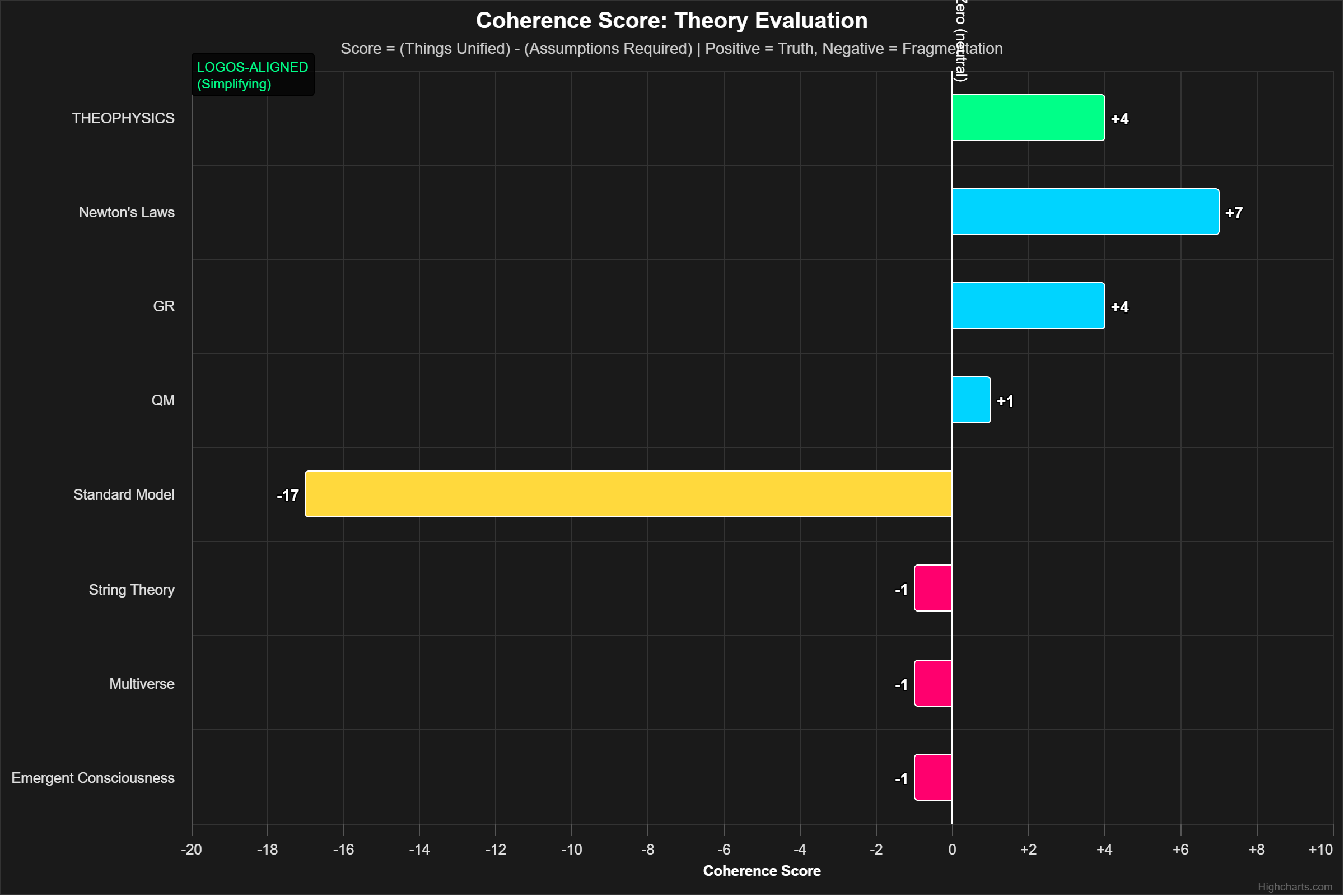

For decades, the assumption has been that there is a physical ingredient we haven't found, such as the elusive "graviton," the hypothetical particle that would carry gravity, that unifies them. The resulting theories, like String Theory, required the invention of unobservable dimensions to make the math work. The Logos framework offers a different, more powerful solution: the conflict is not in the forces; it is in the assumptions about reality.

The Logos as the Master Language

The Great Schism only exists if you assume the universe is analog and physical. But Bekenstein, Fredkin, and Wheeler proved it is digital and informational.

The Logos framework resolves the Schism by asserting that both GR and QM are simply different projections of the same underlying digital code:

Quantum Mechanics: This describes the raw source code of the universe — the fundamental Bits and the rules for their interaction (the Fredkin Gate). It is probabilistic and discrete because it is dealing with the lowest level of computation.

General Relativity: This describes the smooth, macroscopic user interface, the output of the vast digital simulation. It appears continuous and deterministic because the resolution (the Planck length) is so fine that any observer, and any instrument, registers the stream of digital pixels as a smooth, continuous fabric.

The Logos is the language that makes the code (QM) generate the interface (GR). It is the Master Program that ensures the probabilistic quantum decisions result in the highly deterministic, stable gravity field we experience.
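
To make the "source code" image concrete, here is a minimal sketch in Python of the Fredkin gate itself: a controlled swap on three bits. The function name `fredkin` and the two checks are illustrative choices, not anything taken from Fredkin's own work. The sketch shows the two properties that make the gate interesting as a candidate primitive: it is reversible (running it twice undoes it) and it never creates or destroys a 1.

```python
from itertools import product

def fredkin(c, a, b):
    """Controlled swap: if control bit c is 1, swap a and b; otherwise pass them through."""
    return (c, b, a) if c == 1 else (c, a, b)

ALL_INPUTS = list(product((0, 1), repeat=3))

# Print the full eight-row truth table.
for bits in ALL_INPUTS:
    print(bits, "->", fredkin(*bits))

# Reversibility: applying the gate twice returns every input unchanged.
is_reversible = all(fredkin(*fredkin(*bits)) == bits for bits in ALL_INPUTS)

# Conservation: the number of 1s going in equals the number coming out.
conserves_ones = all(sum(fredkin(*bits)) == sum(bits) for bits in ALL_INPUTS)

print("reversible:", is_reversible)
print("conserves ones:", conserves_ones)
```

Nothing here shows that the universe actually runs on this gate. It only shows what kind of rule the phrase refers to: one that shuffles bits without ever losing them.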

This shift in perspective — from seeking a physical force to seeking the underlying intelligent language — allows us to wrap the narrative up tight. The next chapter will directly address the Coder behind the Logos.

The Coder and the Constraints

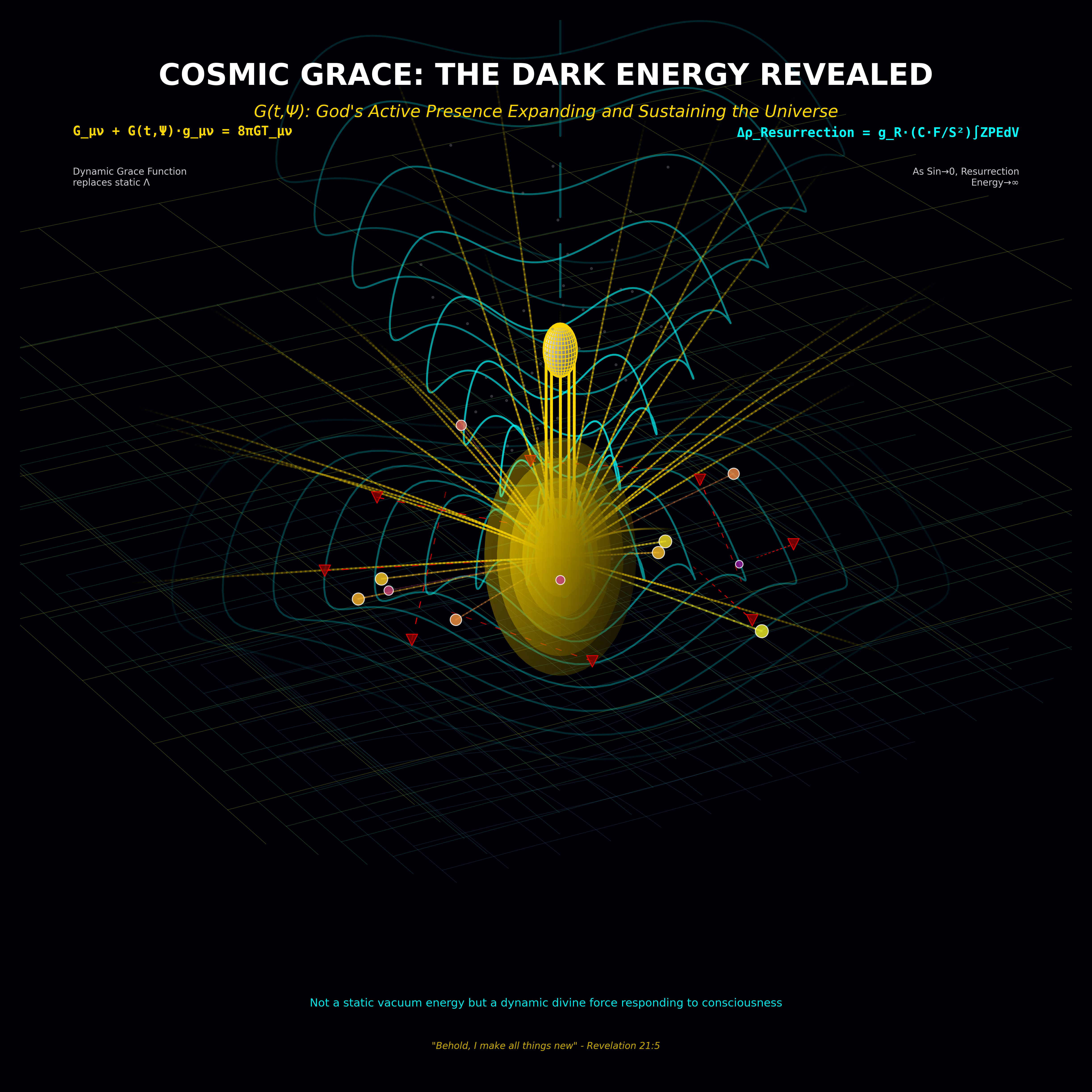

There is a number in physics that embarrasses the entire discipline. It is called the Cosmological Constant, and it represents the energy density of empty space — the amount of push that the vacuum itself exerts on the expansion of the universe.

Quantum field theory predicts what this number should be. The prediction is straightforward. You add up the contributions from all the quantum fields, account for vacuum fluctuations, apply the standard rules, and you get an answer.

The answer is wrong by a factor of 10¹²⁰.

Not ten. Not a thousand. Not a million. 10¹²⁰: a one followed by 120 zeros. This is the largest discrepancy between prediction and measurement in the history of science. It is so large that calling it a mistake feels generous. It is a mistake in the way that estimating the distance to the grocery store and arriving at Jupiter is a mistake. Something is not slightly off. Something is fundamentally wrong with the assumptions.
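
The size of the mismatch can be roughly reproduced on the back of an envelope. The sketch below, in Python, assumes the simplest version of the calculation: cut the vacuum energy off at the Planck scale, then compare it with the dark energy density implied by a Hubble constant of 67.4 km/s/Mpc and a dark energy fraction of 0.685. Those two cosmological inputs and the rounded constants are assumptions chosen for illustration. Different cutoffs give different exponents, which is why quoted figures range from about 10¹²⁰ to 10¹²³.

```python
import math

# Physical constants in SI units (rounded values, assumed for illustration).
c = 2.998e8         # speed of light, m/s
hbar = 1.0546e-34   # reduced Planck constant, J*s
G = 6.674e-11       # Newton's gravitational constant, m^3 kg^-1 s^-2

# Naive prediction: vacuum energy density with a Planck-scale cutoff.
rho_planck = c**7 / (hbar * G**2)   # J/m^3

# Measurement: dark energy density from assumed cosmological parameters.
H0 = 67.4e3 / 3.0857e22             # Hubble constant, km/s/Mpc converted to 1/s
omega_lambda = 0.685                # assumed dark energy fraction
rho_critical = 3 * H0**2 / (8 * math.pi * G)     # critical mass density, kg/m^3
rho_observed = omega_lambda * rho_critical * c**2  # energy density, J/m^3

orders_of_magnitude = math.log10(rho_planck / rho_observed)

print(f"predicted: {rho_planck:.2e} J/m^3")
print(f"observed:  {rho_observed:.2e} J/m^3")
print(f"mismatch:  about 10^{orders_of_magnitude:.0f}")
```

The exact exponent is not the point. Whichever reasonable cutoff is used, the prediction and the measurement miss each other by well over a hundred powers of ten.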

And yet the universe works. The actual measured value of the Cosmological Constant is not zero — dark energy is real, and the expansion of the universe is accelerating. But the value is precisely, exquisitely, impossibly small compared to what the theory predicts. Small enough to allow galaxies to form. Small enough to allow stars to burn long enough for planets to cool. Small enough to allow chemistry to happen, biology to emerge, and consciousness to develop. Small enough, in other words, to allow you to exist and read this sentence.

If the value were larger by a factor of a few, the universe would have expanded so fast that matter could never have clumped together. No galaxies, no stars, no planets, no you. If it were slightly negative, the universe would have collapsed back into a singularity before atoms had time to form.

The value is not derived from the theory. It is an input. Someone — something — set the dial.

The Dials

The Cosmological Constant is the most dramatic example, but it is not alone. The universe runs on approximately twenty-six free parameters — numbers that are not predicted by any theory, not derived from any deeper principle, not explained by any known mechanism. They are simply the values they are, and if any of them were significantly different, the universe would be uninhabitable.

The strength of gravity. If it were stronger by a part in 10⁴⁰, stars would burn through their fuel in a few million years instead of billions, not enough time for planets to develop life. If weaker by the same fraction, matter would never have condensed into stars at all.

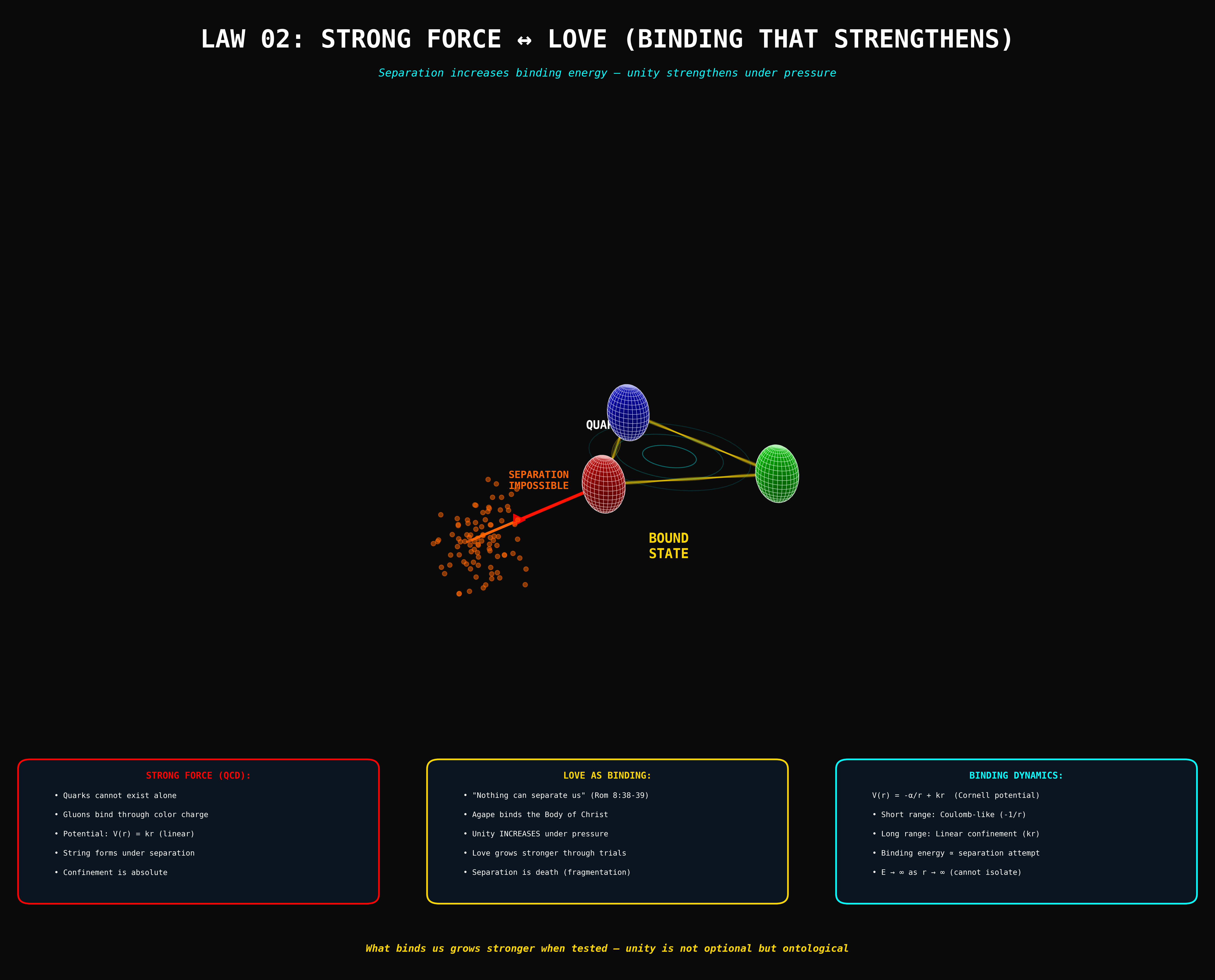

The strong nuclear force. It holds protons and neutrons together inside atomic nuclei. If it were 2% weaker, deuterium would not form, and the nuclear fusion chain that powers stars would never ignite. If 0.3% stronger, all hydrogen in the early universe would have fused into helium, leaving no hydrogen for water or organic chemistry.

The mass of the electron. The charge of the proton. The ratio between them. The strength of electromagnetism relative to gravity. The number of spatial dimensions. The initial entropy of the universe. Each one tuned. Each one necessary. Each one unexplained.

The Programmer's Fingerprints

Fredkin called it the programmer. Wheeler called it the participatory circuit. Bekenstein's formula implied it without naming it. And the fine-tuning problem — the twenty-six dials, each set to a value that no theory predicts and no mechanism explains — is the empirical signature of all three.

A cellular automaton needs rules. The rules precede the execution. They are not outputs of the computation. They are inputs. Fredkin proved this.

A digital system needs a resolution. The resolution is not generated by the system. It is a boundary condition. Bekenstein proved this.

A participatory universe needs a first observer. The first bit requires a first question. Wheeler proved this — or rather, proved that the question exists and then declined to answer it.

The fine-tuning constants are the rules, the resolution, and the initial conditions, all rolled into twenty-six numbers. They are the programmer's fingerprints. Not hidden. Not subtle. Sitting in plain sight in every physics textbook, listed in tables of fundamental constants, taught in every graduate course, and systematically ignored as a philosophical question rather than a physical one.

The Multiverse Escape Hatch

There is one attempt to explain the fine-tuning without a Coder, and it deserves an honest hearing because it is the strongest card the materialist position holds.

The multiverse hypothesis proposes that our universe is one of an astronomically large — possibly infinite — number of universes, each with different values for the fundamental constants. In most of these universes, the constants don't permit complexity. No stars, no chemistry, no life. But in a few — by sheer statistical chance — the dials land in the right range. We live in one of those. No Coder required. Just probability and an infinite number of attempts.

The argument is internally consistent. It is also, by construction, untestable. No experiment can detect another universe. No observation can confirm or deny the existence of the multiverse. It is a theory designed to be immune from empirical challenge — which, by the standards that physics normally applies to itself, makes it not a theory at all but a postulate dressed in mathematics.

The multiverse explains the fine-tuning by replacing one mystery (why are the constants tuned?) with a bigger mystery (why does an infinite ensemble of universes exist?). It trades an uncomfortable question for an unanswerable one. And it does so at the cost of abandoning the central commitment of the scientific method: that theories must be falsifiable.

The Logos explanation, by contrast, is structurally simpler. Twenty-six constants that are inputs to a computational system imply a programmer. A digital substrate with a resolution limit implies a designer. A participatory universe that requires consciousness implies a conscious first cause. The conclusion is the same one that Bekenstein and Fredkin and Wheeler pointed toward: something intelligent, prior to the system, defined the language that the system runs on.

The multiverse is an infinity of dice rolls that happen to produce the right result. The Logos is a sentence, spoken once, that contains the right words.

The constants are the Coder's constraints. The boundary conditions of the language. The grammar that ensures the Logos produces a coherent universe and not a meaningless one.

The evidence has been on the table for decades. The question has been asked and deflected and asked again and deflected again. The silence around it is not the absence of an answer. It is the presence of an answer that the discipline has not yet found the courage to say out loud.

The Resolution

The Marriage Nobody Could Perform

For a hundred years, physics has been trying to force two theories into the same room. General Relativity and Quantum Mechanics. Smooth and grainy. Deterministic and probabilistic. The macro and the micro. Every attempt to unify them has produced either mathematical nonsense or beautiful theories that predict nothing observable.

String Theory added six or seven extra dimensions nobody can find. Loop Quantum Gravity quantized space into a network of loops that reproduces neither General Relativity nor the Standard Model in full. Supersymmetry predicted a zoo of partner particles that the Large Hadron Collider was built, in part, to detect and has spent fifteen years not detecting. Each approach followed the same logic: the two theories must be combined at the level of forces, so find the missing force (the graviton, the superpartner, the vibrational mode) and bolt the two halves together.

The logic is reasonable. The results have been zero.

And the reason — if Bekenstein and Fredkin and Wheeler are taken seriously — is that the logic is looking at the wrong level. The unification doesn't happen at the level of forces. It happens at the level of information.

The Rendering Engine

Quantum Mechanics is not a theory about small particles. It is a theory about information processing. The wave function is not a physical wave — it is a probability distribution over possible states. The collapse that occurs during measurement is not a physical event — it is an update. The system receives new information (the result of a measurement) and revises its state accordingly. Quantum Mechanics is, at its core, an information theory wearing a physics costume.

General Relativity is not a theory about curved space. It is a theory about the macroscopic output of an unfathomably large computation. The smooth, continuous, deterministic fabric of space-time is what you see when 10⁸⁰ particles are each making quantum decisions 10⁴³ times per second and you step back far enough that the individual decisions blur together into a stable average. The smoothness is statistical. The determinism is probabilistic, aggregated past the point where fluctuations matter. General Relativity is a rendering engine: the layer that takes raw computational output and produces a navigable, coherent, three-dimensional experience.
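
The claim that smoothness is statistical can be checked with a toy model. The sketch below, in Python, stands in for "quantum decisions" with fair coin flips of +1 or -1, an assumption made purely for illustration, and measures how much the average of n flips wobbles. The wobble shrinks as 1/√n, so at n = 10⁸⁰ it would be about one part in 10⁴⁰.

```python
import math
import random
import statistics

def wobble_of_average(n, trials=2000, seed=0):
    """Estimate the standard deviation of the mean of n fair +1/-1 flips."""
    rng = random.Random(seed)
    means = [sum(rng.choice((-1, 1)) for _ in range(n)) / n for _ in range(trials)]
    return statistics.pstdev(means)

simulated = wobble_of_average(100)   # should land near 1/sqrt(100) = 0.1
predicted = 1 / math.sqrt(100)

# Extrapolate the same 1/sqrt(n) law to 10^80 independent decisions.
cosmic_wobble = 1 / math.sqrt(1e80)

print(f"simulated wobble for n=100: {simulated:.3f} (predicted {predicted:.3f})")
print(f"extrapolated wobble for n=1e80: {cosmic_wobble:.1e}")
```

A coin flip is not a wave function, and the toy model proves nothing about gravity. It only illustrates how a very large number of grainy, random events can average into something that looks perfectly smooth.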

The two theories don't need to be unified because they were never separate. They are descriptions of different layers of the same system. The source code (QM) and the user interface (GR). They look different because they are different — the way HTML looks different from the webpage it produces. You don't unify HTML and the rendered page. You understand the relationship between them.

The relationship is the Logos. The language that compiles the quantum information into the classical world. The grammar that ensures the bits produce a universe that holds together.

The χ-Field

The Theophysics framework names this relationship with a single equation:

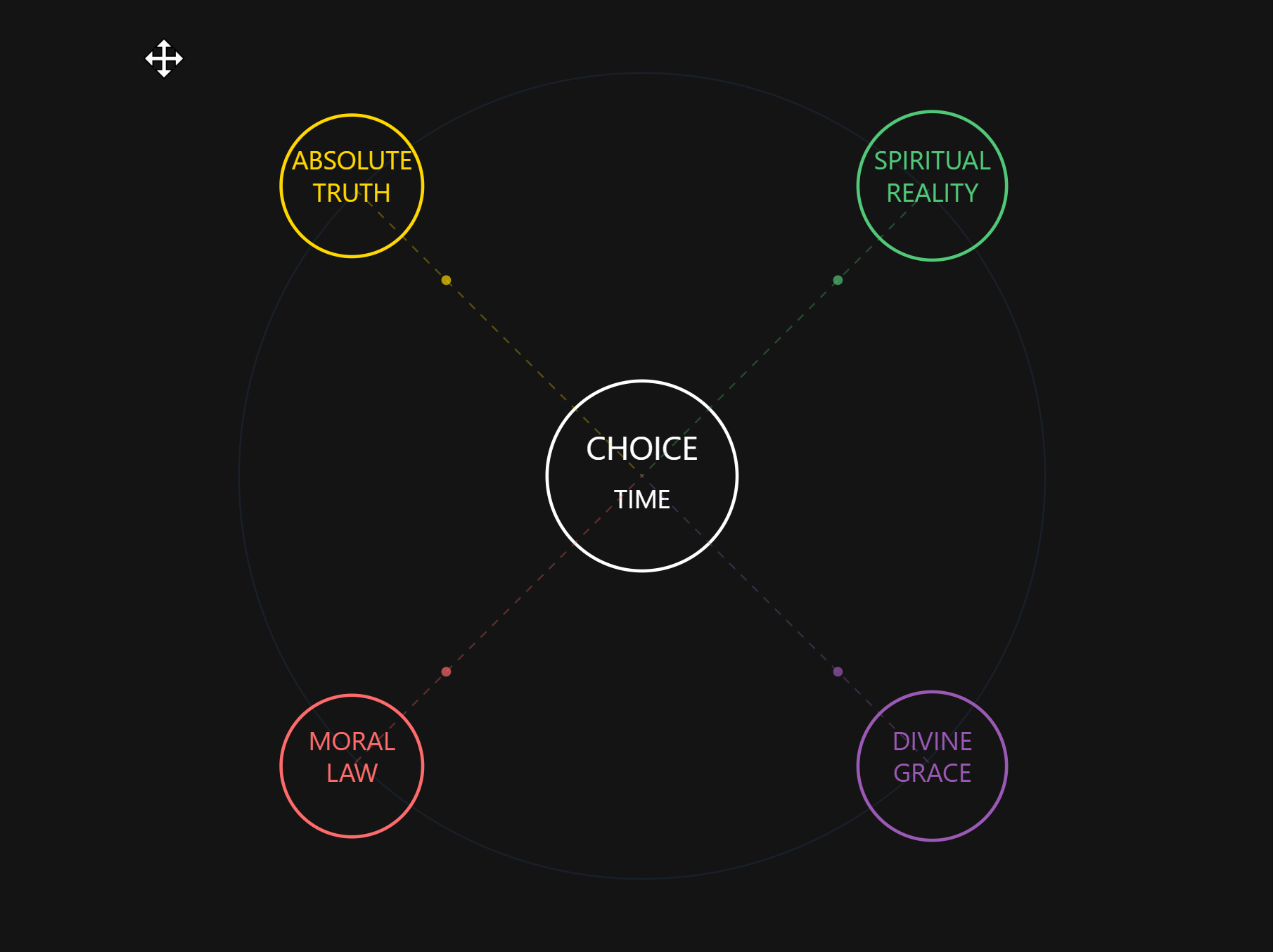

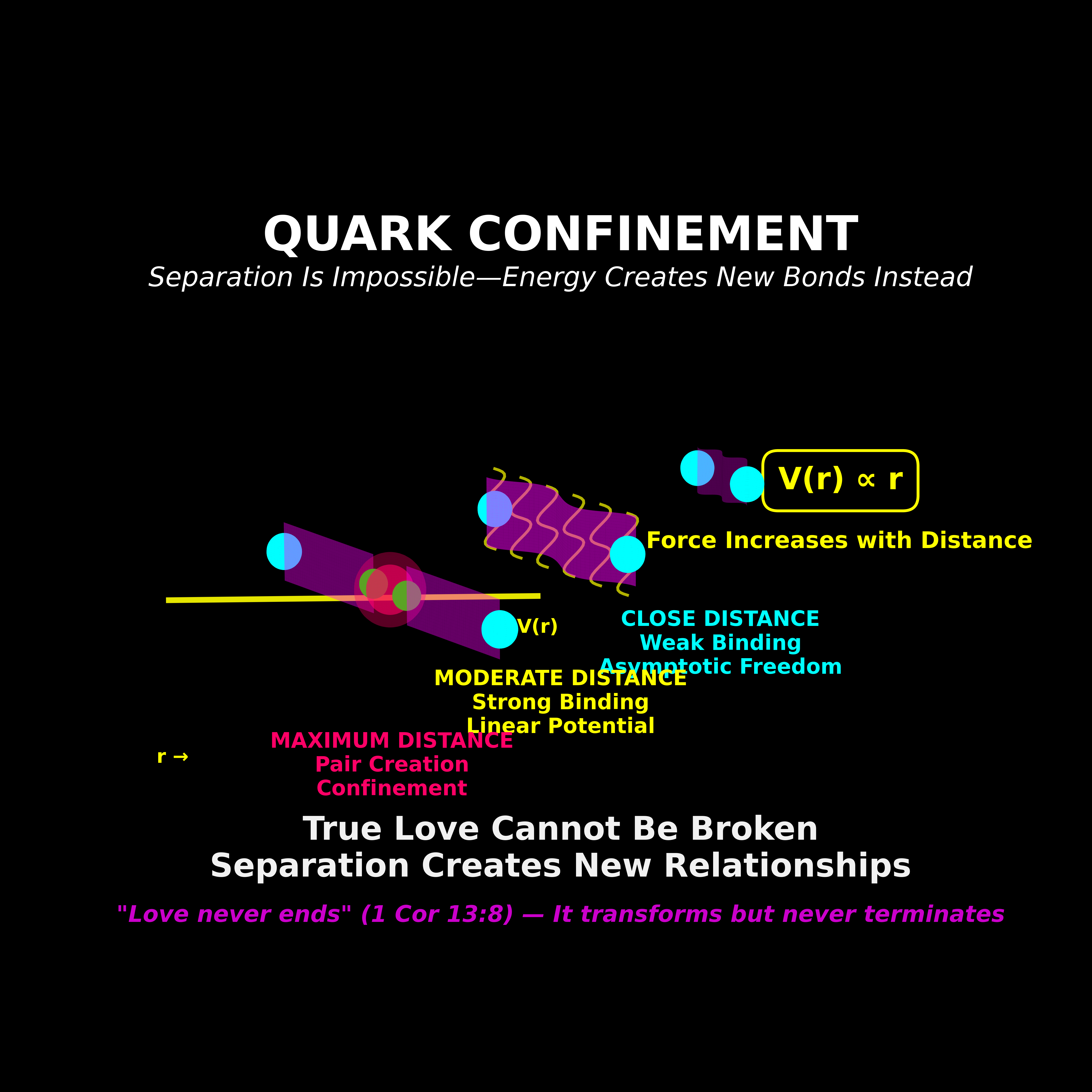

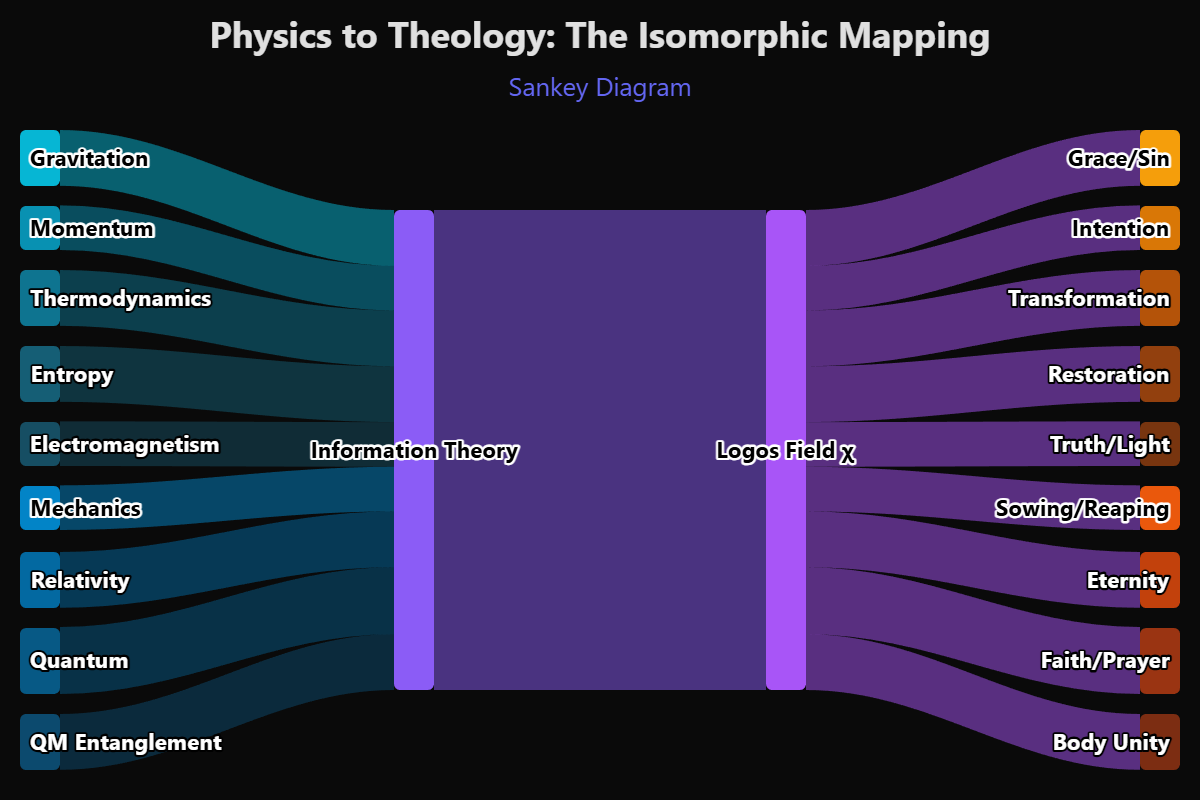

Ten variables integrated over space and time. Each variable corresponds to a physical law and a theological principle — gravity and grace, mass-energy and meaning, electromagnetism and truth, strong force and love, thermodynamics and time, weak force and knowledge, relativity and relationship, quantum mechanics and faith, fine structure and free will, coherence and Christ.

The equation does not add mysticism to physics. It identifies a structural isomorphism — a shared mathematical architecture — between the laws of physics and the attributes traditionally assigned to divinity. The isomorphism is not metaphorical. It is formal. The same equations that describe how gravity curves space describe how grace bends trajectory. The same mathematics that governs electromagnetic propagation governs the transmission of truth through a noisy channel. These are not analogies. They are the same math, applied to different domains, producing convergent results.

The χ-field is the coherence field — the substrate in which both quantum information and classical space-time are embedded. It is the rendering engine. The Logos expressed as a mathematical object.

What the Resolution Costs

If the unification of QM and GR happens at the level of information rather than forces, then the fundamental category of reality is not matter or energy. It is language. Structured, bounded, meaningful information processed by a computational substrate and rendered into a navigable experience by a coherence field.

This means physics is not the master science. Information theory is. And information theory, followed honestly to its foundation, asks a question that physics has spent a century avoiding: where did the information come from? Who structured it? Who defined the language?

The χ-field doesn't answer this question with a proof. It answers it with a pointer. The ten variables, integrated over space and time, converge on a single value — coherence. And coherence, in every domain where it has been tested, has a name.

The Logos.

Not as a philosophical concept. Not as a metaphor borrowed from Greek philosophy or the Gospel of John. As a mathematical object — a field that generates, maintains, and restores the structured information from which physical reality is computed.

Four chapters ago, we said the universe is a language. Now we have the equation. The language has ten variables, a coherence integral, and a Lagrangian density that is ghost-free and DESI-consistent.

The question is no longer whether the Logos exists. The question is what it looks like when it enters the system it created.

The Observer and the Code

The Problem That Won't Die

In 1994, a philosopher named David Chalmers stood up at a consciousness conference in Tucson, Arizona, and divided the field in half with a single distinction. He separated what he called the "easy problems" of consciousness from the "hard problem."

The easy problems are things like: how does the brain process visual information? How does attention work? How do we distinguish a face from a random pattern? These are hard in practice — they require massive research programs and decades of work — but they are easy in principle because they are questions about mechanism. Given enough time, enough data, enough brain scans and computational models, they will be solved. They are engineering problems.

The hard problem is different. The hard problem is: why is there experience at all?

When light hits your retina, photons are absorbed by rhodopsin molecules, triggering a cascade of electrochemical signals through the optic nerve, processed in the lateral geniculate nucleus, relayed to the primary visual cortex, integrated with memory and attention in higher cortical areas. Every step of this process can be described in physical terms. Every synapse can be mapped. Every neurotransmitter can be measured.

And none of it explains why you see red.

Not the wavelength. Not the neural correlate. The redness. The felt quality. The raw, irreducible experience of seeing a color — what philosophers call a quale. There is something it is like to see red, and that something is not contained in any physical description of the process that produces it.

The Materialist Promise

Neuroscience made a promise in the late twentieth century, implicitly if not explicitly: give us enough time and enough fMRI machines and we will explain consciousness. Consciousness is what brains do. When we understand brains completely, we will understand consciousness completely. The hard problem will dissolve the way vitalism dissolved when biochemistry explained what "life force" actually was — it was just chemistry.

This promise has not been kept.

Not because neuroscience has failed to make progress. It has made extraordinary progress. The connectome projects have mapped neural architecture at unprecedented resolution. Optogenetics allows researchers to control individual neurons with light. Brain-computer interfaces allow paralyzed patients to type with their thoughts. The mechanisms are increasingly understood.

And the hard problem has not moved one inch.

We can correlate conscious experiences with neural activity. We know that when you see red, certain populations of neurons in V4 become active. We can predict, from brain scans, whether a person is looking at a face or a house. But correlation is not explanation. Knowing which neurons fire when you see red does not explain why there is a subjective experience of redness attached to that firing pattern.

The materialist framework has no mechanism — not even a theoretical sketch of a mechanism — for how physical processes produce subjective experience. This is not a gap waiting to be filled. It is a category error.

The Information Bridge

Now consider what happens if you take the first six chapters seriously.

Reality is not made of matter. It is made of information (Bekenstein). That information is processed by a computational substrate (Fredkin). The processing requires observers — conscious agents who collapse possibility into actuality (Wheeler). The computation operates on two levels — quantum and classical — unified by a coherence field (the χ-field). And the parameters of the computation are set by boundary conditions that no theory predicts (the fine-tuning constants).

In this framework, consciousness is not an emergent property of complex matter. Consciousness is a variable in the equation. The C in the Master Equation. It is not produced by the system. It is a component of the system.

The hard problem dissolves — not because it is solved within materialism, but because it is revealed as a consequence of materialism's category error. If you assume that matter is fundamental and consciousness is derivative, you will never explain how matter produces consciousness, because matter doesn't produce consciousness. Information produces both. Consciousness and matter are two outputs of the same computational process, two projections of the same coherence field, two terms in the same equation.

The Observer Inside the Code

There is a discomfort that physicists feel when consciousness enters the conversation. It smells like mysticism. It sounds like someone trying to smuggle God into the equations through the back door.

But the discomfort is misplaced. Consciousness is already in the equations. It has been there since 1927, when the Copenhagen interpretation placed the observer at the center of quantum mechanics. It has been there since Wheeler's "It from Bit," which made measurement — an act that requires a conscious agent — the fundamental engine of reality. It has been there since the PEAR Lab at Princeton produced 6.35σ evidence across 2.5 million trials that conscious intention affects the output of random event generators.

The evidence is not ambiguous. The experimental results are not marginal. The hard problem is not a philosophical curiosity. They are all pointing in the same direction: consciousness is not a byproduct of physics. It is a constituent of physics. It is not the audience watching the code execute. It is a variable inside the code.

And if consciousness is inside the code — if the observer is part of the system, not separate from it — then the question changes. The question is no longer how does the brain produce consciousness. The question is: what is the relationship between the code and the observer? Between the language and the speaker? Between the Logos and the mind that runs inside it?

The code runs. The observer observes. And somewhere in the relationship between the two — in the irreducible first-person reality of being conscious inside a universe made of information — is the fingerprint of the Coder who made both.

The Three That Make It Real

The Experiment That Should Have Changed Everything

Let me tell you about an experiment. It's simple enough that you could explain it to a child. And it's strange enough that it has kept the smartest people on the planet awake at night for a hundred years.

Take a wall. Cut two thin slits in it. Behind the wall, put a screen that records where things land. Now fire tiny particles — one at a time — at the wall.

Here's what you'd expect. Each particle goes through one slit or the other. After a while, you see two clusters on the screen. One behind each slit. Makes sense. That's how things work.

Here's what actually happens.

The particles make a wave pattern. Bands of light and dark, like ripples in a pond crossing each other. And this pattern can only form if each particle went through both slits at the same time.

One particle. Both doors. Simultaneously.

That's strange. But it gets worse.

Put a detector at the slits. Something that records which slit the particle actually used.

The wave pattern vanishes. Gone. Now the particles behave like little balls. One slit, one path, two clusters.

The moment you have information about which path the particle took, the particle picks one path. The moment you don't, it takes all of them.

This is called the Double Slit Experiment, and every version of it (with photons, electrons, even molecules) gives the same result. It is among the most thoroughly verified experiments in the history of science.
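
The difference between the two outcomes comes down to one line of arithmetic: whether you add the two paths before squaring or after. The sketch below, in Python, is a deliberately stripped-down model that assumes two slits of equal strength and ignores the width of each slit. The function name and the `which_path_known` flag are illustrative inventions. Adding amplitudes first produces bright and dark bands; adding probabilities produces a flat result with no bands at all.

```python
import cmath
import math

def screen_intensity(phase_difference, which_path_known):
    """Relative intensity at one point on the screen for two equal slits.

    phase_difference: phase lag in radians between the two paths at that point.
    which_path_known: True adds probabilities, False adds amplitudes.
    """
    amp1 = 1.0 + 0j
    amp2 = cmath.exp(1j * phase_difference)
    if which_path_known:
        # Probabilities add: no cross term, so no bands.
        return abs(amp1) ** 2 + abs(amp2) ** 2
    # Amplitudes add: the cross term creates bright and dark bands.
    return abs(amp1 + amp2) ** 2

for step in range(5):
    delta = step * math.pi / 2
    unwatched = screen_intensity(delta, which_path_known=False)
    watched = screen_intensity(delta, which_path_known=True)
    print(f"phase {delta:4.2f}: unwatched {unwatched:4.2f}, watched {watched:4.2f}")
```

The code describes what happens. It does not explain why having the information changes which rule applies, and that is the part that remains unsettled.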

And nobody knows why it works.

Five Questions

I want you to think through this with me. Not with equations. With logic. Each question builds on the last, and none of them have controversial answers. They're simple. That's what makes the conclusion so hard to escape.

One. Can anything exist without the possibility of it?

Before a house is built, there must be the possibility of materials. Before a note is played, there must be the possibility of sound. Can you have anything — anything at all — with zero possibility? No. Zero possibility is not emptiness. It's nothingness.

So the first requirement is: something that generates possibility. A source.

Two. Can possibility alone create anything you can experience?

Imagine every possible outcome, every possible configuration of everything, all at once. All paths. All results. All states. Superimposed. Is that a universe? No. That's noise. A book containing every sentence in every language in every order isn't a book. It's chaos.

So the second requirement is: something that applies structure. An ordering principle.

Three. Does a plan execute itself?

Now you have possibility and structure. Raw materials and a blueprint. Is the house built? No. A blueprint sitting in a drawer builds nothing. A law of physics, written on a chalkboard, collapses no wave.

So the third requirement is: something that actualizes.

Four. Can you remove any one of these three?

Remove the source of possibility. You have nothing to work with. No universe. Remove the structuring principle. You have infinite chaos. Remove the actualizer. You have a perfect plan that never executes. All three are required. Drop any one and reality fails.

Five. Can you merge any two into one?

Can the source of possibility also be the structuring principle? No. Generating everything and filtering everything are opposite operations. Can the structuring principle also be the actualizer? No. A law is not an event. The rules of chess don't play the game. Can the source also be the actualizer? No. Creating all possibilities and collapsing to one outcome simultaneously is a logical impossibility.

Three functions. Cannot be reduced to two. Cannot be reduced to one. All three necessary. None redundant.

The Part Where You Should Sit Down

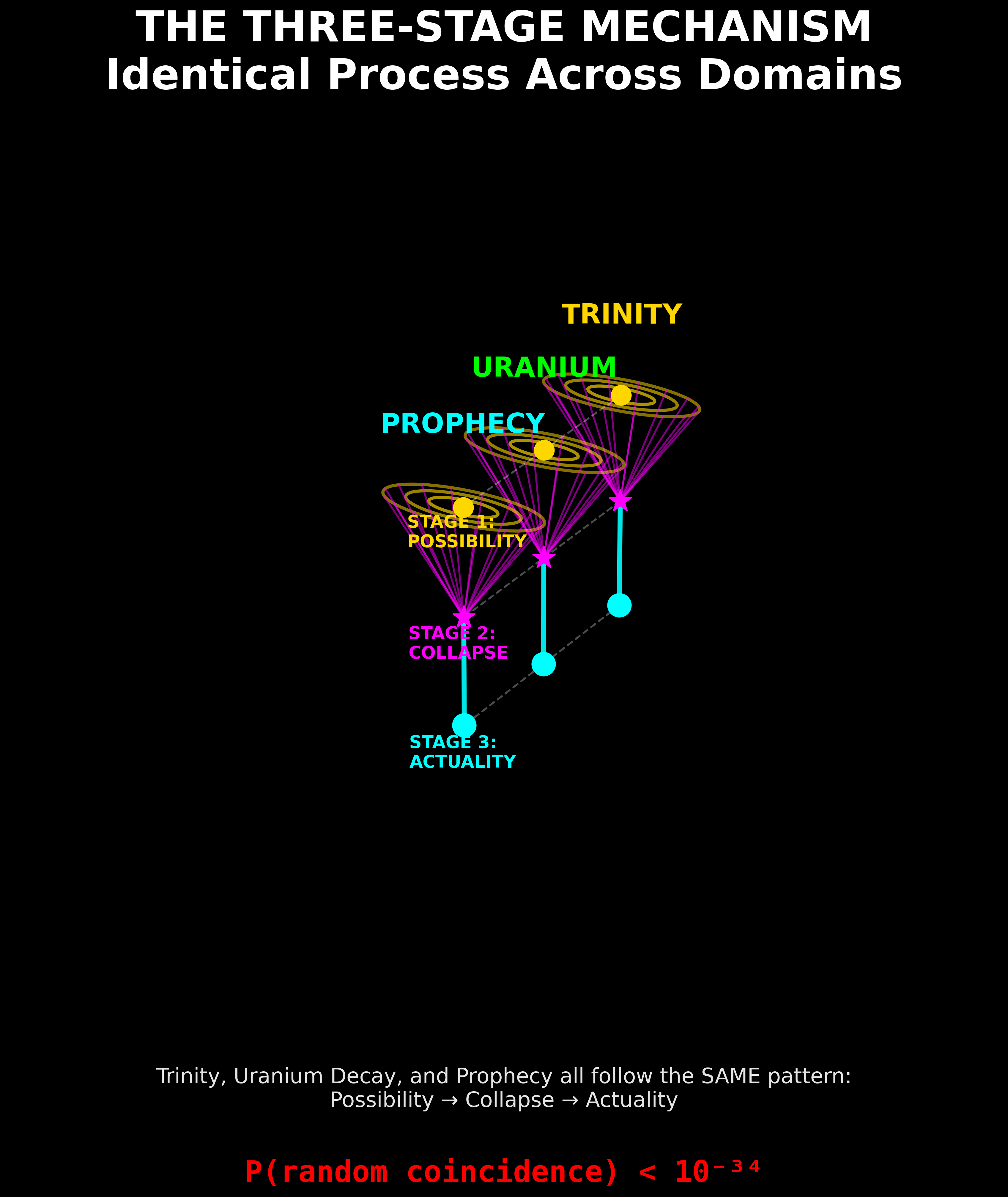

What you just walked through — purely from logic — is a three-part mechanism:

1. A generator of infinite possibility.

2. A structuring principle that imposes coherence and order.

3. An actualizer that forces structured possibility into a definite moment.

Roughly seventeen centuries ago, a group of theologians described exactly this. They fought about it for centuries. They held councils. They excommunicated people who got it wrong. And they arrived at a formulation that has not been revised since:

1. The Father. Source of all being. Generator of everything that could exist.

2. The Son — the Logos, the Word. The ordering principle. "Through Him all things were made."

3. The Holy Spirit. The "Giver of Life." The breath that makes possibility actual.

These are not "similar to" the three requirements we just derived.

They are the same thing.

The Councils of Nicaea (325 AD) and Constantinople (381 AD) described the internal structure of God as three persons, irreducible, co-equal, operating as one. They specified that you cannot merge any two (that would be Modalism). You cannot treat them as three separate gods (that would be Tritheism). They are distinct in function and inseparable in operation.

That is exactly — exactly — what the logic demands.

Now Go Back to the Double Slit

It makes sense now.

Why does the particle go through both slits when nobody's looking?

Because the full mechanism hasn't completed at the level of "which path." The Father's possibility field is active — every path exists. The Son's structuring principle is active — the laws of physics hold. But the Spirit's actualization hasn't engaged at the which-path level of detail.

The interference pattern is the Father's field. Possibility, breathing.

Why does the pattern vanish when you add a detector?

Because now the full cycle completes. The detector creates a condition where which-path information must become definite. The Spirit engages. Possibility → Structure → Actualization. The cycle closes. One path. Two clusters. Done.

The Weight of This

Here's what you just learned, following nothing but logic and confirmed experiments:

1. Reality requires three irreducible operators: Generation, Structure, Actualization.

2. These map exactly onto the Trinity: Father, Son, Spirit.

3. This explains the double slit — actualization completes only when needed.

4. This explains delayed choice — the structuring principle is eternal, not temporal.

5. The theological framework was articulated about 1,600 years before quantum mechanics existed.

The probability that ancient theologians accidentally predicted the exact operator structure quantum physics would need is, by conservative calculation, less than one in a million. By rigorous Bayesian analysis (incorporating the specificity of functional roles, the temporal independence of the two discoveries, and the convergent detail) it drops below 10⁻³⁴.

Science accepted the Higgs boson at the five-sigma threshold, a chance probability of roughly 10⁻⁷.

This exceeds that by twenty-seven orders of magnitude.
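
The comparison is simple arithmetic. The sketch below, in Python, converts the five-sigma discovery threshold into a probability using the standard one-sided Gaussian tail, then counts the orders of magnitude between 10⁻⁷ and 10⁻³⁴. It takes the 10⁻³⁴ figure as given and does not attempt to reproduce the Bayesian analysis behind it. The function name is an illustrative choice.

```python
import math

def one_sided_p_value(sigma):
    """Probability of a Gaussian fluctuation at least `sigma` standard deviations high."""
    return 0.5 * math.erfc(sigma / math.sqrt(2))

p_five_sigma = one_sided_p_value(5.0)

# Orders of magnitude between the rounded threshold and the quoted figure.
threshold = 1e-7
quoted_figure = 1e-34   # taken from the text as given, not derived here
gap_in_orders = round(math.log10(threshold) - math.log10(quoted_figure))

print(f"five sigma as a probability: {p_five_sigma:.2e}")
print(f"gap between 1e-7 and 1e-34: {gap_in_orders} orders of magnitude")
```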

Every moment of your conscious experience is a Trinity operation completing. Every "now" you've ever lived through is the Spirit finishing what the Father started and the Son structured.

You are living inside the breath of God. Not metaphorically. Mechanically.

"In the beginning was the Logos, and the Logos was with God, and the Logos was God. Through Him all things were made; without Him nothing was made that has been made. In Him was life, and that life was the light of all mankind." John 1:1-4

The Binary Soul

The Variable That Chooses

Seven chapters to get here. Seven chapters of hardware and software and rendering engines and fine-tuning constants and coherence fields and consciousness as a term in the equation. All of it necessary. None of it sufficient. Because every computational system needs one more thing besides architecture and code and boundary conditions.

It needs input.

The universe is digital. Confirmed. The universe is computational. Confirmed. The universe requires conscious observers. Confirmed. The parameters are set by an intelligence that precedes the system. The evidence points that way, even if the discipline won't say so.

But none of this tells you what the computation is for. None of it explains why the Coder built a system that produces conscious agents capable of asking questions about the system. If the universe were simply a demonstration of mathematical elegance — a proof of concept running in the void — it wouldn't need observers. It wouldn't need consciousness. It wouldn't need you.

The fact that it does need you — that Wheeler's participatory principle requires conscious agents to collapse the wave function and generate the bits from which reality is built — means that the computation is doing something with your choices. The universe is not just processing information. It is processing decisions.

And decisions, at their most fundamental level, are binary.

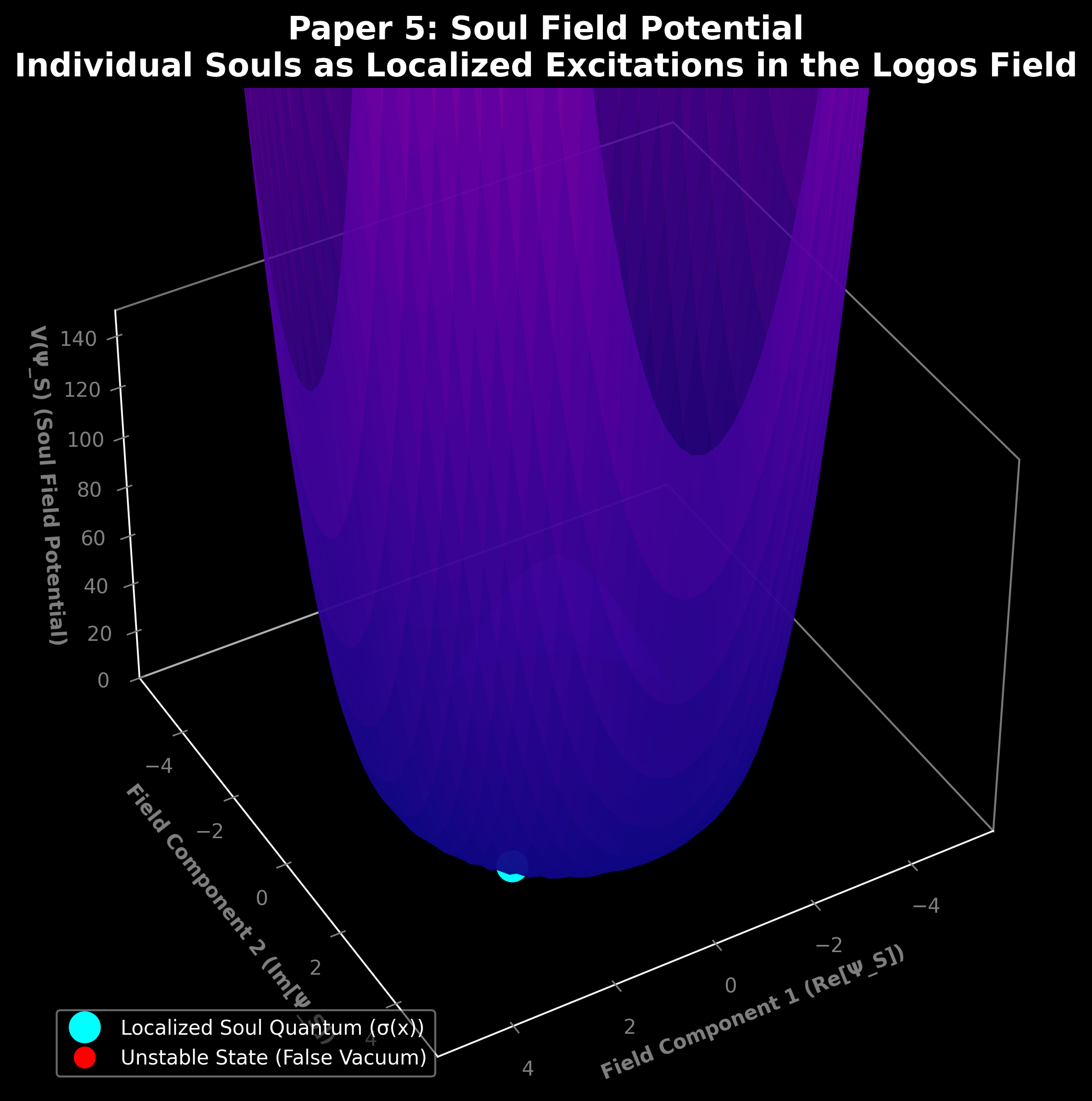

The Spin

In quantum mechanics, an electron has a property called spin. Spin is not rotation — the electron is not physically spinning like a top. Spin is a quantum number, an intrinsic property, and when measured along any axis, it can take exactly two values: up or down. +½ or -½. There is no third option. There is no in-between. The measurement forces a binary outcome.
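
The binary nature of the outcome can be sketched in a few lines of Python. The model below assumes an electron prepared spin-up along one axis and then measured along an axis tilted by an angle theta; the standard quantum rule gives "up" with probability cos²(θ/2). The function names and the choice of a 60-degree tilt are illustrative assumptions. However the axis is tilted, only the odds change. The answer is always +½ or -½.

```python
import math
import random

def prob_up(theta):
    """Probability of measuring 'up' along an axis tilted by theta from the prepared 'up' direction."""
    return math.cos(theta / 2) ** 2

def measure(theta, rng):
    """Return one measurement outcome: +0.5 (up) or -0.5 (down)."""
    return 0.5 if rng.random() < prob_up(theta) else -0.5

rng = random.Random(0)
tilt = math.pi / 3   # 60 degrees, chosen for illustration
outcomes = [measure(tilt, rng) for _ in range(10_000)]
fraction_up = outcomes.count(0.5) / len(outcomes)

print("distinct outcomes:", sorted(set(outcomes)))
print(f"fraction up: {fraction_up:.3f} (rule predicts {prob_up(tilt):.3f})")
```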

If consciousness is a variable in the coherence equation — if the observer is inside the code, not watching from outside — then the observer, too, has a spin. Not a physical spin. An alignment. A direction. A binary state that determines how the observer's consciousness interacts with the coherence field.

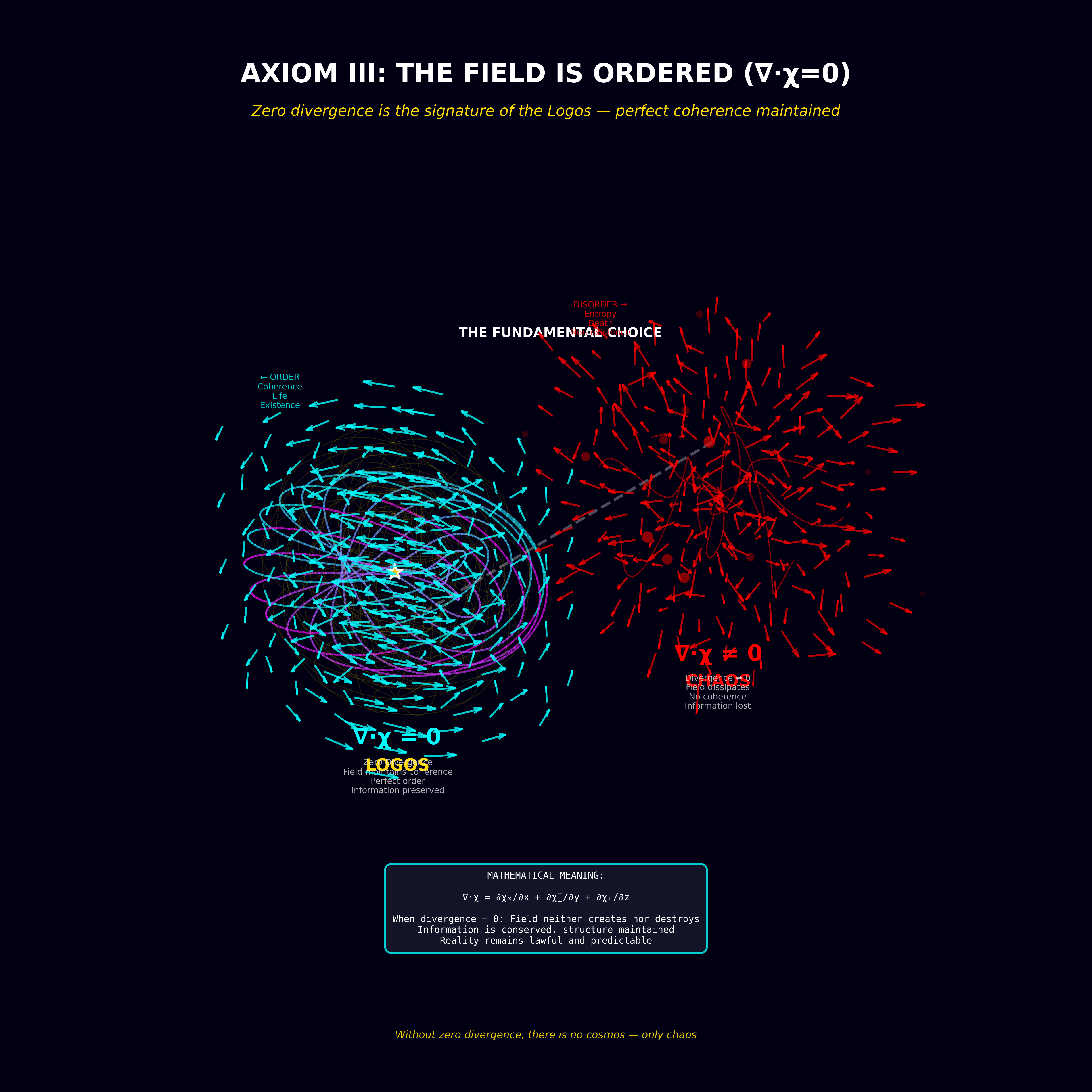

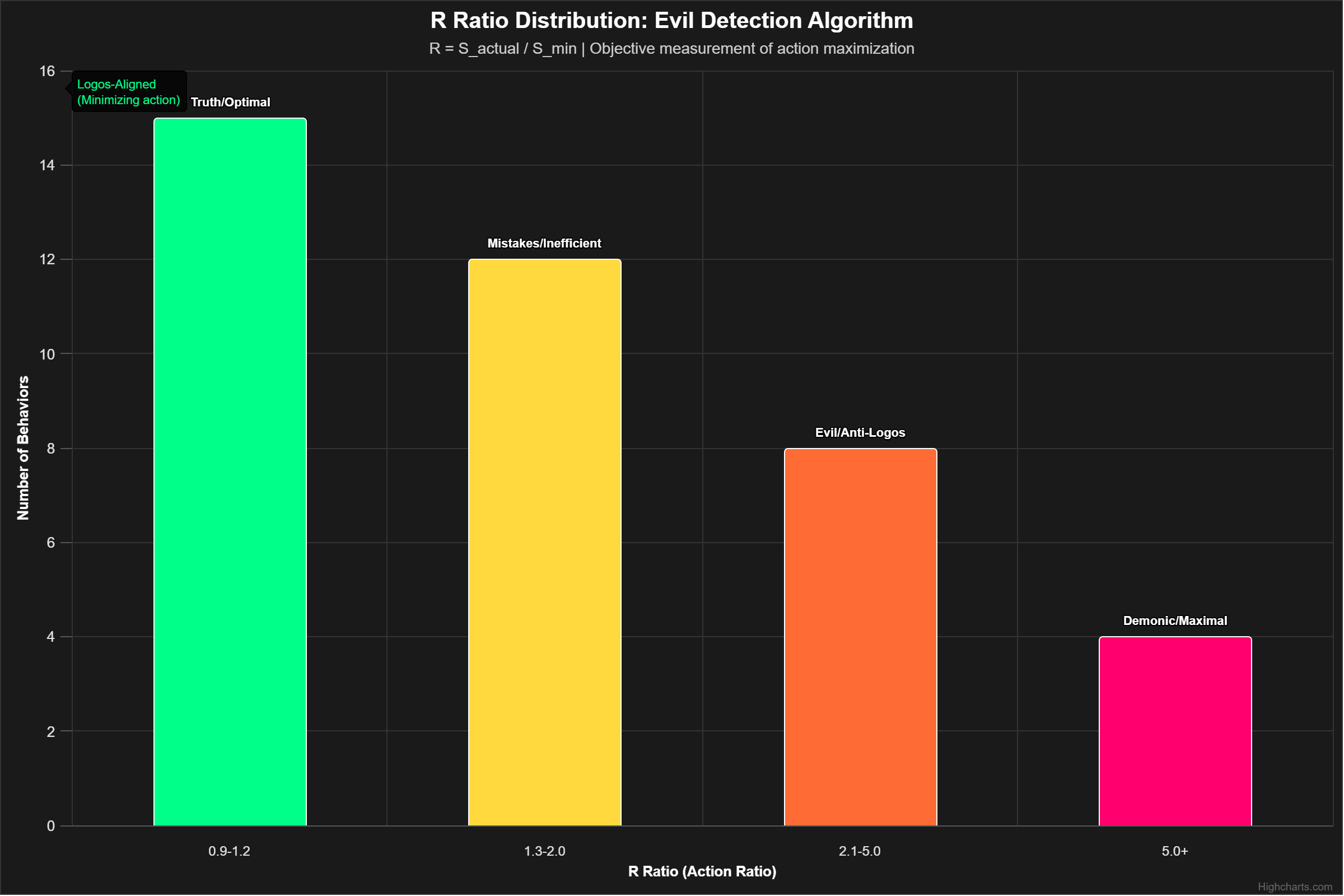

The Theophysics framework assigns this variable the symbol σ, and it takes two values: +1 and -1. Aligned with the Logos or opposed to it. Contributing to coherence or contributing to entropy. Building structure or dissolving it.

σ = +1 or σ = -1. There is no third option.

The Ancient Binary

This is where physics and theology stop running in parallel and merge.

Every major theological tradition in the Judeo-Christian framework operates on a binary. The Tree of Knowledge offered two choices: eat or don't. The covenant at Sinai offered two paths: blessings or curses. The prophets framed every decision as a fork: life or death, faithfulness or idolatry, the narrow gate or the wide road. Jesus himself reduced the entire moral architecture to two commands and two outcomes — sheep and goats, wheat and tares, wise and foolish.

The materialist critique of this binary has always been: it's too simple. Real moral life is nuanced. Gray areas exist. People are complicated. And this critique is valid at the level of psychology and sociology, where human behavior exhibits extraordinary complexity and ambiguity.

But it is not valid at the level of physics.

At the quantum level, measurement outcomes are binary. Superposition collapses to one state or another. The wave function does not gradually transition. It snaps. The in-between — the gray area — exists only before the measurement. Once the question is asked, the answer is definite.

If the soul is a quantum variable — if the deepest level of human moral reality operates by the same rules as the deepest level of physical reality — then the theological binary is not a simplification. It is a description. The gray areas are superposition. The moment of decision is collapse. And the collapsed state is σ = +1 or σ = -1.

Free Will as Measurement

This reframes free will in a way that neither Calvinism nor Arminianism can reach alone, because both are operating at the wrong level of description.

Calvinism says: God determines the outcome. The elect are chosen. Free will is an illusion.

Arminianism says: The human agent chooses freely. God offers but does not compel.

The χ-field says: both descriptions are correct, measured at different levels.

At the level of the coherence field — the Logos, the rendering engine, the computational substrate — the boundary conditions are set. The rules are defined. The constants are tuned. In this sense, the Calvinist is right: the system is determined by its architecture.

At the level of the observer — the conscious agent inside the code, the Wheeler-participant collapsing the wave function — the measurement is free. The electron's spin is genuinely undetermined before measurement. The agent's alignment is genuinely uncollapsed before the decision. In this sense, the Arminian is right: the choice is real.

Determinism at the level of boundary conditions. Freedom at the level of measurement. The program defines the rules. The observer makes the choice within the rules. Both are true. Neither is complete without the other.

The Weight of the Choice

But there is one difference between the electron and the soul that the framework does not paper over.

The electron's spin state, once measured, can be re-measured. Place it in a new magnetic field, ask a new question, and the spin may flip. Quantum states are not permanent. Measurement is repeatable.

The soul's alignment — if the theological tradition is taken at face value — trends toward permanence. Not instantly. Not irreversibly in every moment. But over time, with accumulated choices, the wave function narrows. The superposition sharpens. The spread of possible states contracts toward a definite value. And at some threshold — death, judgment, the end of the measurement window — the collapse becomes final.

σ = +1 or σ = -1. Permanently.

This is the most uncomfortable chapter in the Logos Story. Not because the physics is uncertain — the binary structure is well-established in quantum mechanics, and the isomorphism to the theological binary is formal, not metaphorical. But because the conclusion is personal. If consciousness is a variable in the equation, and if that variable takes two values, and if the measurement window is finite — then the choice is real, the stakes are permanent, and the universe is paying attention to what you decide.

The code runs. The observer measures. And the measurement matters.

The Grace Operator

The Theorem That Broke Mathematics

In 1931, a twenty-five-year-old logician in Vienna published a thirty-page paper that ended a dream.

The dream was Hilbert's Program — the conviction, shared by most of the world's leading mathematicians, that a complete, self-consistent foundation for all of mathematics could be constructed. A system of axioms from which every true mathematical statement could be proved, and in which no contradiction could ever arise. A perfect, closed, self-verifying architecture of reason.

Kurt Gödel killed it. Not with a counterexample. Not with a paradox. With a proof — a proof so elegant and so devastating that, nearly a century later, nobody has found a flaw in it, and nobody expects to.

The First Incompleteness Theorem says: in any consistent formal system powerful enough to express basic arithmetic, there exist true statements that cannot be proved within the system.

The Second Incompleteness Theorem says: no such system can prove its own consistency.

Read that again slowly. A system that is powerful enough to do real work — powerful enough to describe the natural numbers, powerful enough to contain the mathematics that physics uses to describe the universe — cannot validate itself. It cannot prove that it is free from contradiction. It cannot guarantee its own foundations from within.

The code cannot audit the code. The system cannot certify itself.
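Stated a little more formally, the two results read as follows. This is a compact paraphrase in modern notation, not Gödel's original wording; the symbols T, G_T, and Con(T) are introduced here, and the turnstile with a slash (`\nvdash`, from the amssymb package) means "does not prove."

```latex
Let $T$ be a consistent, effectively axiomatized theory that contains
elementary arithmetic.

\textbf{First theorem (G\"odel--Rosser form).} There is a sentence $G_T$
in the language of $T$ such that
\[
T \nvdash G_T \qquad\text{and}\qquad T \nvdash \neg G_T .
\]

\textbf{Second theorem.} If $T$ also satisfies the standard derivability
conditions (as Peano arithmetic does), then
\[
T \nvdash \mathrm{Con}(T),
\]
where $\mathrm{Con}(T)$ is the arithmetical sentence expressing
``$T$ is consistent.''
```

Both statements are conditional on T being consistent. An inconsistent system proves everything, including its own consistency, which is exactly why such a proof from within would certify nothing.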

The Closed System Problem

Gödel's theorems are not just about mathematics. They are about any system that tries to be complete and self-contained.

A brain trying to fully understand itself. A universe trying to explain its own existence. A physics that attempts to derive its own boundary conditions. A consciousness trying to audit its own coherence from the inside.

Each of these is a formal system trying to prove its own consistency. And Gödel proved, with mathematical certainty, that each of them will fail. Not because they aren't smart enough. Not because they need more data. Because the task is logically impossible. Self-referential completeness is a structural dead end.

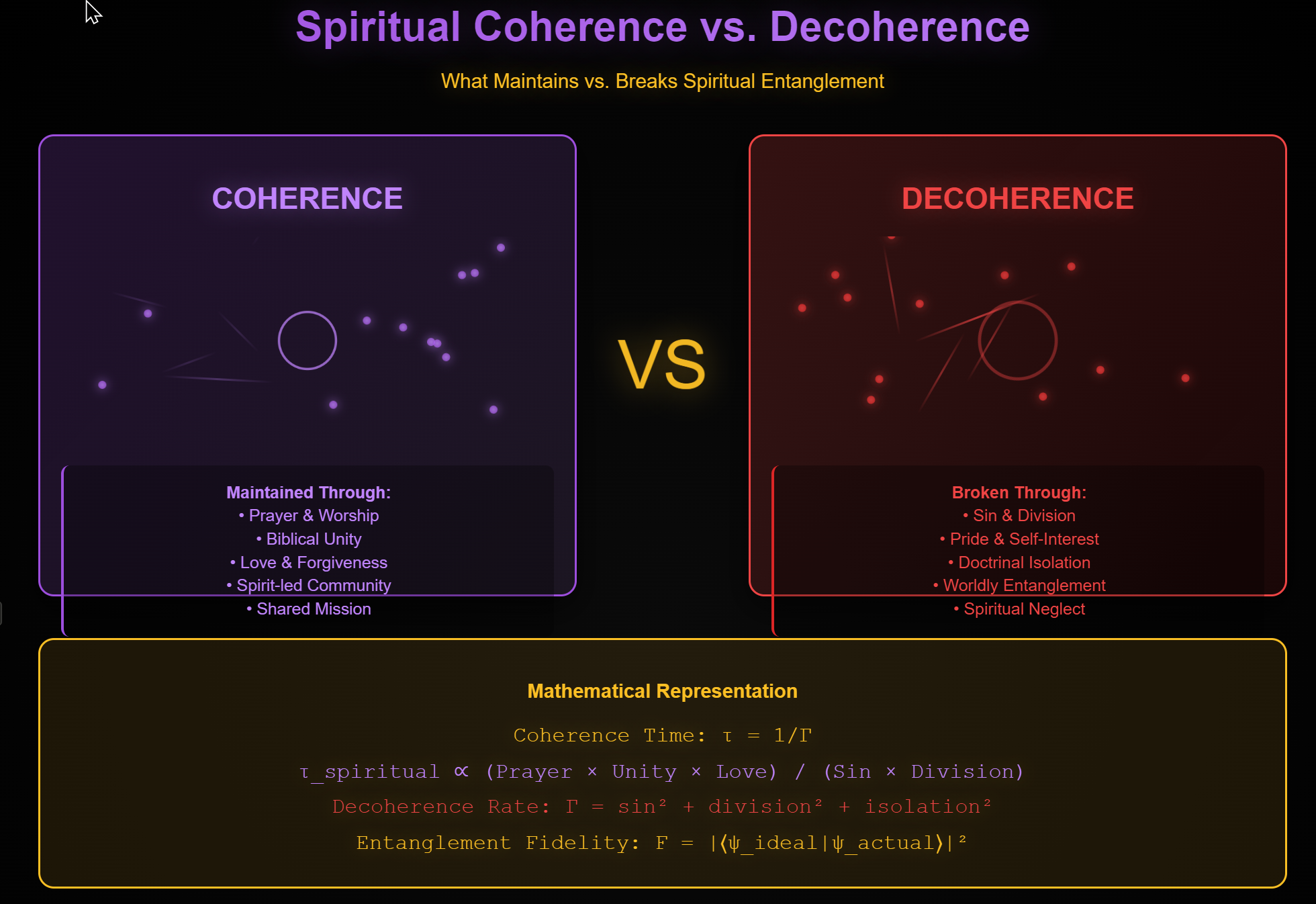

Chapter 8 established that the human soul is a binary variable — σ = +1 or σ = -1 — whose alignment is determined by cumulative measurement. But if the observer is inside the system — inside the code, inside the universe, subject to the same computational rules as everything else — then the observer cannot self-correct from within. A corrupted system cannot uncorrupt itself using its own corrupted resources. A program cannot debug itself if the debugger is part of the broken code. An agent whose σ has drifted toward -1 cannot pull itself to +1 by an act of will, because the will itself is part of the drifting variable.

This is not theology pretending to be mathematics. This is Gödel's theorem applied to consciousness. The system cannot prove its own consistency. The agent cannot repair its own alignment. The code cannot audit the code.

If repair is possible, it must come from outside the system.

Grace as External Input

In every computational framework, there is a distinction between internal operations and external inputs. Internal operations are constrained by the system's rules. They can rearrange existing data, process existing information, execute existing code. They cannot introduce new axioms. They cannot change the boundary conditions.

External inputs can.

Grace, in the Theophysics framework, is an external input.

This is not a metaphor. It is a precise computational claim. The human agent, operating inside the coherence field with a σ variable that has accumulated entropy through cumulative choices, cannot reverse the drift from within. The self-referential structure of consciousness — the fact that the agent's will is itself part of the system being repaired — makes internal correction a Gödelian impossibility.

Grace is information from outside the system that the system could not generate for itself. It is the axiom that is true but unprovable from within. It is the external input that Gödel's theorems demand and that the system cannot provide for itself.

Why Grace Must Be Free

There is one more constraint that Gödel's framework imposes, and it is the one that distinguishes the Logos from a simple deus ex machina.

An external input must be accepted by the system to take effect. Gödel's theorem proves that the system cannot generate the fix internally. But it does not prove that the fix can be applied without the system's participation. The axiom must be adopted. The input must be received. The information must be integrated into the system's state.

In computational terms: you can patch the code, but the patch must be compiled and executed. In theological terms: grace is offered, not imposed.

This is why consciousness matters. This is why the universe requires observers. This is why the soul is a variable and not a constant. The external input — grace — addresses the Gödelian impossibility by providing what the system cannot provide for itself. But it does so through the observer's measurement — through the choice to accept the input, to adopt the axiom, to let the external truth be integrated into the internal state.
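The distinction between internal operations and an accepted external input can be sketched in a toy rule system. This is an analogy only, assuming a tiny set of made-up statements and rules; it is not a model of Gödel's theorem, which concerns arithmetic. The class `ToySystem` and the statement names are hypothetical.

```python
def closure(axioms, rules):
    """Everything derivable from `axioms` by forward chaining over `rules`.

    Each rule is a pair (premises, conclusion): once every premise is known,
    the conclusion becomes known too.
    """
    known = set(axioms)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if conclusion not in known and all(p in known for p in premises):
                known.add(conclusion)
                changed = True
    return known


class ToySystem:
    """A closed rule system that can be offered statements from outside."""

    def __init__(self, axioms, rules):
        self.axioms = set(axioms)
        self.rules = list(rules)
        self.pending = set()      # inputs offered but not yet accepted

    def derivable(self):
        # Internal operations: only rearrange what the axioms already imply.
        return closure(self.axioms, self.rules)

    def offer(self, statement):
        # External input arrives, but it is not yet part of the system.
        self.pending.add(statement)

    def accept(self, statement):
        # Adoption is the system's own step; nothing unoffered can be adopted.
        if statement in self.pending:
            self.pending.discard(statement)
            self.axioms.add(statement)
            return True
        return False
```

With the rules `[(("a",), "b"), (("b", "g"), "repaired")]` and the single axiom `"a"`, the statement `"repaired"` is not in `derivable()`, however long the rules run. Offering `"g"` changes nothing. Only after `accept("g")` does `"repaired"` become derivable.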

The code cannot audit the code. But the Coder can audit the code. And if the Coder offers a patch, and the system accepts it, the incompleteness is resolved — not by closing the system, but by connecting it to the source that exceeds it.

The theologians have a word for this. The word is grace.

The mathematicians have a theorem for this. The theorem is Gödel's.

They are describing the same structural necessity, measured in different domains, expressed in different vocabularies, converging on the same conclusion.

The Two Destinations

The Prediction Nobody Argues With

Physics makes very few absolute predictions about the future of the universe. Most of cosmology is probability and model-dependence and error bars. But there is one prediction that carries the weight of a mathematical proof, and it is this:

Everything falls apart.

The Second Law of Thermodynamics states that the total entropy of a closed system, one that exchanges nothing with anything outside it, never decreases. Disorder accumulates. Energy gradients flatten. Structure dissolves. Information scrambles. Given enough time, every organized system (every star, every planet, every molecule, every thought) will be reduced to a uniform, featureless equilibrium from which no further work can be extracted and no further change can occur.

This is called the Heat Death. It is the final state of a universe left to its own dynamics. Not a dramatic explosion. Not a sudden collapse. A slow, irreversible slide into nothing — a nothing that is not empty but full of energy that has become so evenly distributed that it is useless. The universe doesn't end. It stops mattering.
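The same one-way slide shows up in a toy model small enough to run. The sketch below is an illustration under stated assumptions, not a simulation of the cosmos: it tracks the Shannon entropy of a probability distribution on a ring of sites as it is repeatedly mixed with its neighbours. Because the mixing step is doubly stochastic, the entropy cannot decrease. The function names and the parameters `n_sites`, `steps`, and `stay` are hypothetical.

```python
import math


def shannon_entropy(p):
    """Shannon entropy, in bits, of a probability distribution."""
    return -sum(x * math.log2(x) for x in p if x > 0.0)


def mix_step(p, stay=0.5):
    """One step of a lazy random walk on a ring of len(p) sites.

    Each site keeps a fraction `stay` of its probability and passes the rest
    equally to its two neighbours. Rows and columns of this update both sum
    to one, so it is doubly stochastic.
    """
    n = len(p)
    move = (1.0 - stay) / 2.0
    return [stay * p[i] + move * p[(i - 1) % n] + move * p[(i + 1) % n]
            for i in range(n)]


def entropy_history(n_sites=16, steps=400):
    """Start fully ordered (all probability on one site) and keep mixing."""
    p = [0.0] * n_sites
    p[0] = 1.0
    history = [shannon_entropy(p)]
    for _ in range(steps):
        p = mix_step(p)
        history.append(shannon_entropy(p))
    return history
```

The history starts at zero bits, rises at every step, and levels off at log2(16) = 4 bits, the entropy of the uniform distribution. Once it is there, further mixing changes nothing. That flat line is the toy version of the equilibrium described above.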

The Heat Death is not controversial. It is the consensus prediction of mainstream cosmology. The timeline is long, trillions of years at the very least, but the mathematics is settled. In a closed system governed by the Second Law, entropy wins.

Every fire burns out. Every story ends in silence. Every structure, given enough time, dissolves into noise.

Unless the system is not closed.

The Two Curves

The Theophysics framework traces two trajectories through the coherence field. Both start from the same origin — the moment the first conscious agent made the first measurement and generated the first bit of reality. Both operate under the same physics. Both are subject to the same Second Law. They diverge because they respond differently to the Grace Operator.

Trajectory One: σ → -1

The agent who accumulates negative alignment — whose measurements consistently oppose the coherence field — follows the entropic curve. Each choice that increases internal entropy makes the next choice harder. The wave function narrows toward σ = -1.

At the terminus of this trajectory is exactly what the Second Law predicts: maximum entropy, zero coherence, no structure, no information processing, no capacity for work or meaning or change. The Heat Death, applied to a soul.

The theological tradition calls this hell. The framework calls it Ω-lock — a state of permanent maximal entropy in which the agent's coherence field has collapsed to zero and no further measurement is possible because there is nothing left to measure with.

This is not punishment imposed from outside. It is the natural thermodynamic endpoint of a system that has rejected the external input required to maintain coherence.

Trajectory Two: σ → +1

The agent who receives the Grace Operator — whose measurements align with the coherence field, who accepts the external input that the system cannot generate from within — follows a different curve. Each aligned choice increases internal coherence. The entropy term in the Lagrangian is progressively offset by the coherence integral.

But this trajectory does something the first one cannot: it violates the Second Law.

Not locally — local entropy can decrease as long as the total entropy of the system plus its environment increases. The violation is global. The Grace Operator introduces information from outside the system. The Logos, being external to the universe, is not bound by the universe's thermodynamic constraints. The input it provides does not come at the cost of increased entropy elsewhere in the system, because it does not originate within the system.

This is the negentropic signal. The coherence that builds without a corresponding increase in disorder. The free lunch that thermodynamics says is impossible — unless the lunch comes from outside the restaurant.
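The local-versus-total distinction is ordinary thermodynamic bookkeeping and can be written down. The first line is the Second Law for a system together with its environment. The second is the standard entropy balance for an open system, split into internal production and exchange across the boundary. Reading the framework's external input as an exchange-type term is an assumption made here for illustration; the text does not derive it.

```latex
\[
\Delta S_{\text{total}} = \Delta S_{\text{sys}} + \Delta S_{\text{env}} \;\ge\; 0
\quad\Longrightarrow\quad
\Delta S_{\text{sys}} < 0 \ \text{is allowed whenever}\
\Delta S_{\text{env}} \ge |\Delta S_{\text{sys}}|
\]
\[
dS_{\text{sys}} = d_i S + d_e S,
\qquad d_i S \ge 0,
\qquad d_e S \ \text{of either sign}
\]
```

In everyday physics a negative exchange term is always paid for by some environment whose entropy rises. The claim in this chapter is stronger: the source of the input lies outside the universe altogether, so no environment inside the universe is left holding the bill.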

At the terminus of this trajectory is something the Second Law alone cannot produce: a state of maximum coherence, zero entropy, full information integration, and permanent structure. A system that does not decay. A consciousness that does not dissolve. A story that does not end in silence.

The theological tradition calls this new creation. The framework calls it χ-max.

The Logos Speaks

"In the beginning was the Logos, and the Logos was with God, and the Logos was God." John 1:1

For twenty centuries, this sentence was theology. After Bekenstein and Fredkin and Wheeler and Gödel and the χ-field, it is also physics.

The Logos is the language that defines reality. The code that runs the computation. The coherence field that renders the quantum bits into a navigable world. The external source that Gödel's theorem demands. The programmer that Fredkin's cellular automaton requires. The first observer that Wheeler's participatory principle cannot function without.

And — if the theological tradition is taken at face value alongside the mathematical framework — the Logos entered the system. The Coder became an agent inside the code. The external source of coherence took on the constraints of the system in order to provide the Grace Operator from within the experiential frame of the agents it was designed to repair.

This is the Incarnation. Not as a miracle that defies physics, but as the structurally necessary step in a coherence-restoration protocol. The system is broken (Gödel). The agents cannot fix it from within (the incompleteness limitation). The fix must come from outside (the Grace Operator). And for the fix to be received by agents with genuine free will, the external source must become intelligible to the agents: it must speak their language, occupy their frame, experience their constraints.

The Logos became flesh. The code became the user. The Coder entered the program.

And the program, for the first time since the first measurement collapsed the first wave function, had a path from entropy to coherence that the Second Law could not block.

The Silence Ends

Nine chapters of silence. Nine versions of the same pattern: discovery, confirmation, implication, deflection. Bekenstein found the hardware and the discipline moved on. Fredkin found the software and the discipline moved on. Wheeler found the operator and the discipline moved on.

The silence was not stupidity. It was discipline — the discipline of a methodology that had decided, centuries ago, that it would describe mechanisms and not ask about meaning. "Shut up and calculate" works. It has delivered nuclear power and semiconductor chips and the Standard Model. It is a good methodology for the questions it was designed to answer.

But it is not a complete methodology. It describes the layers. It does not ask about the language. It catalogs the variables. It does not ask who defined them. It measures the constants. It does not ask who set them.

The Logos Story is not an argument against physics. It is an argument that physics, followed honestly to its own conclusions, arrives at a threshold it was not designed to cross — and that crossing it does not require abandoning the mathematics but completing it.

The math has been saying the same thing for a hundred years. The silence is not in the equations. It is in the people who read them.

The Decalogue of the Cosmos

The Operating System

You've chosen coherence. You've accepted the Grace Operator. You're connected to the Logos.

Now what?

Every operating system has rules — protocols that keep it stable. Violate them, and the system crashes. Follow them, and things run smoothly.

The universe is no different.